Anthropic / Benj Edwards

On Thursday, Anthropic introduced Claude 3.5 Sonnet, its newest AI language model and the first in a new series of “3.5” models that build upon Claude 3, launched in March. Claude 3.5 can compose text, analyze data, and write code. It features a 200,000 token context window and is available now on the Claude website and through an API. Anthropic also launched Artifacts, a new feature in the Claude interface that shows related work documents in a dedicated window.

So far, people outside of Anthropic seem impressed. “This model is really, really good,” wrote independent AI researcher Simon Willison on X. “I think this is the new best overall model (and both faster and half the price of Opus, similar to the GPT-4 Turbo to GPT-4o jump).”

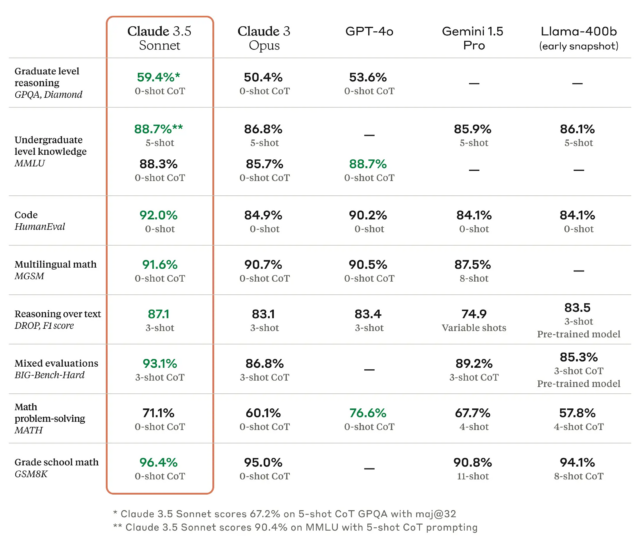

As we've written before, benchmarks for large language models (LLMs) are troublesome because they can be cherry-picked and often don’t capture the feel and nuance of using a machine to generate outputs on almost any conceivable topic. But according to Anthropic, Claude 3.5 Sonnet matches or outperforms competitor models like GPT-4o and Gemini 1.5 Pro on certain benchmarks like MMLU (undergraduate-level knowledge), GSM8K (grade school math), and HumanEval (coding).

If all that makes your eyes glaze over, that's OK; it's meaningful to researchers but mostly marketing to everyone else. A more useful performance metric comes from what we might call “vibemarks” (coined here first!), which are subjective, non-rigorous aggregate feelings measured by competitive usage on sites like LMSYS’s Chatbot Arena. The Claude 3.5 Sonnet model is currently under evaluation there, and it's too soon to say how well it will fare.

Claude 3.5 Sonnet also outperforms Anthropic’s previous-best model (Claude 3 Opus) on benchmarks measuring “reasoning,” math skills, general knowledge, and coding abilities. For example, the model demonstrated strong performance in an internal coding evaluation, solving 64 percent of problems compared to 38 percent for Claude 3 Opus.

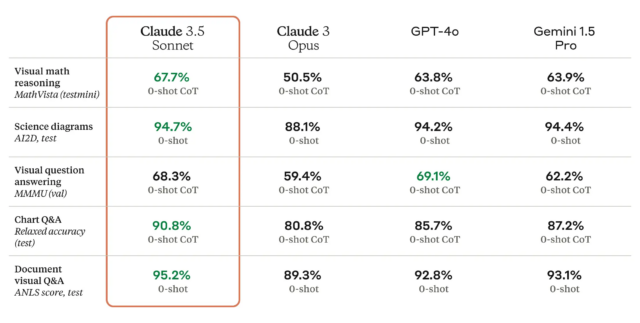

Claude 3.5 Sonnet is also a multimodal AI model that accepts visual input in the form of images, and the new model is reportedly excellent at a battery of visual comprehension tests.

Roughly speaking, the visual benchmarks mean that 3.5 Sonnet is better at pulling information from images than previous models. For example, you can show it a picture of a rabbit wearing a football helmet, and the model knows it's a rabbit wearing a football helmet and can talk about it. That's fun for tech demos, but the technology is still not accurate enough for applications where reliability is mission critical.