Generative AI models have seen tremendous growth, offering cutting-edge solutions for text generation, summarization, code generation, and question answering. Despite their versatility, these models often struggle when applied to niche or domain-specific tasks, because their pre-training is typically based on large, generalized datasets. To address these gaps and maximize their utility in specialized scenarios, fine-tuning with domain-specific data is essential to boost accuracy and relevance.

Meta’s newly launched Llama 3.2 series sets a new benchmark in generative AI with its advanced multimodal capabilities and optimized performance across diverse hardware platforms. The collection spans lightweight models like Llama-3.2-1B and Llama-3.2-3B, which support up to 128,000 tokens of context and are tailored for edge devices. These models are ideal for on-device applications such as real-time summarization, instruction following, and multilingual text generation. At the other end of the spectrum, the larger Llama-3.2-11B and Llama-3.2-90B models offer powerful vision-enabled capabilities for tasks such as image understanding, document analysis, and visual grounding. This allows for sophisticated use cases like generating captions for images, interpreting complex graphs, and reasoning over visual data. For instance, the Meta Llama 3.2 models can analyze sales data presented in a graph to provide actionable insights, or locate specific objects on a map using natural language instructions.

In this post, we demonstrate how to fine-tune Meta’s latest Llama 3.2 text generation models, Llama 3.2 1B and 3B, using Amazon SageMaker JumpStart for domain-specific applications. By using the pre-built solutions available in SageMaker JumpStart and the customizable Meta Llama 3.2 models, you can unlock the models’ enhanced reasoning, code generation, and instruction-following capabilities and tailor them to your unique use cases. Whether you’re working in finance, healthcare, or any other specialized field, fine-tuning these models allows you to bridge the gap between general AI capabilities and domain-specific expertise.

Solution overview

SageMaker JumpStart is a powerful feature within the SageMaker machine learning (ML) environment, offering practitioners a comprehensive hub of publicly available and proprietary foundation models (FMs). This managed service accelerates the ML development process by providing access to a growing list of cutting-edge models from leading model hubs and providers. You can quickly evaluate, compare, and select FMs based on predefined quality and responsibility metrics for tasks such as article summarization and image generation.

SageMaker JumpStart allows full customization of pre-trained models to suit specific use cases using your own data. Deployment to production environments is streamlined through the user interface or SDK, enabling rapid integration into applications. The platform also supports organizational collaboration by allowing the sharing of artifacts, including models and notebooks, to expedite model building and deployment. Administrators can manage the visibility of models within the organization, enhancing governance and security.

Additionally, SageMaker JumpStart enables practitioners to deploy models to dedicated SageMaker instances within a network-isolated environment, maintaining compliance and data security. By using the robust training and deployment capabilities available in SageMaker, you can customize and scale models to meet diverse ML requirements efficiently.

Prerequisites

To try out this solution using SageMaker JumpStart, you need the following prerequisites:

Fine-tune Meta Llama 3.2 text generation models

In this section, we demonstrate how to fine-tune Meta Llama 3.2 text generation models. We first look at fine-tuning with the SageMaker Studio UI, which requires no code. We then cover how to fine-tune the model using the SageMaker Python SDK.

No-code fine-tuning using the SageMaker Studio UI

SageMaker JumpStart provides access to publicly available and proprietary FMs from third-party and proprietary providers. Data scientists and developers can quickly prototype and experiment with various ML use cases, accelerating the development and deployment of ML applications. It reduces the time and effort required to build ML models from scratch, allowing teams to focus on fine-tuning and customizing the models for their specific use cases. These models are released under different licenses designated by their respective sources. It’s essential to review and adhere to the applicable license terms before downloading or using these models to make sure they’re suitable for your intended use case.

You can access the Meta Llama 3.2 FMs through SageMaker JumpStart in the SageMaker Studio UI and through the SageMaker Python SDK. In this section, we cover how to discover these models in SageMaker Studio.

SageMaker Studio is an IDE that provides a web-based visual interface for performing the ML development steps, from data preparation to model building, training, and deployment. For instructions on getting started and setting up SageMaker Studio, refer to Amazon SageMaker Studio.

- In SageMaker Studio, access SageMaker JumpStart by choosing JumpStart in the navigation pane.

You’re presented with the list of public models offered by SageMaker, where you can explore models from other providers.

- To start using the Meta Llama 3.2 models, under Providers, choose Meta.

You’re presented with a list of the available models.

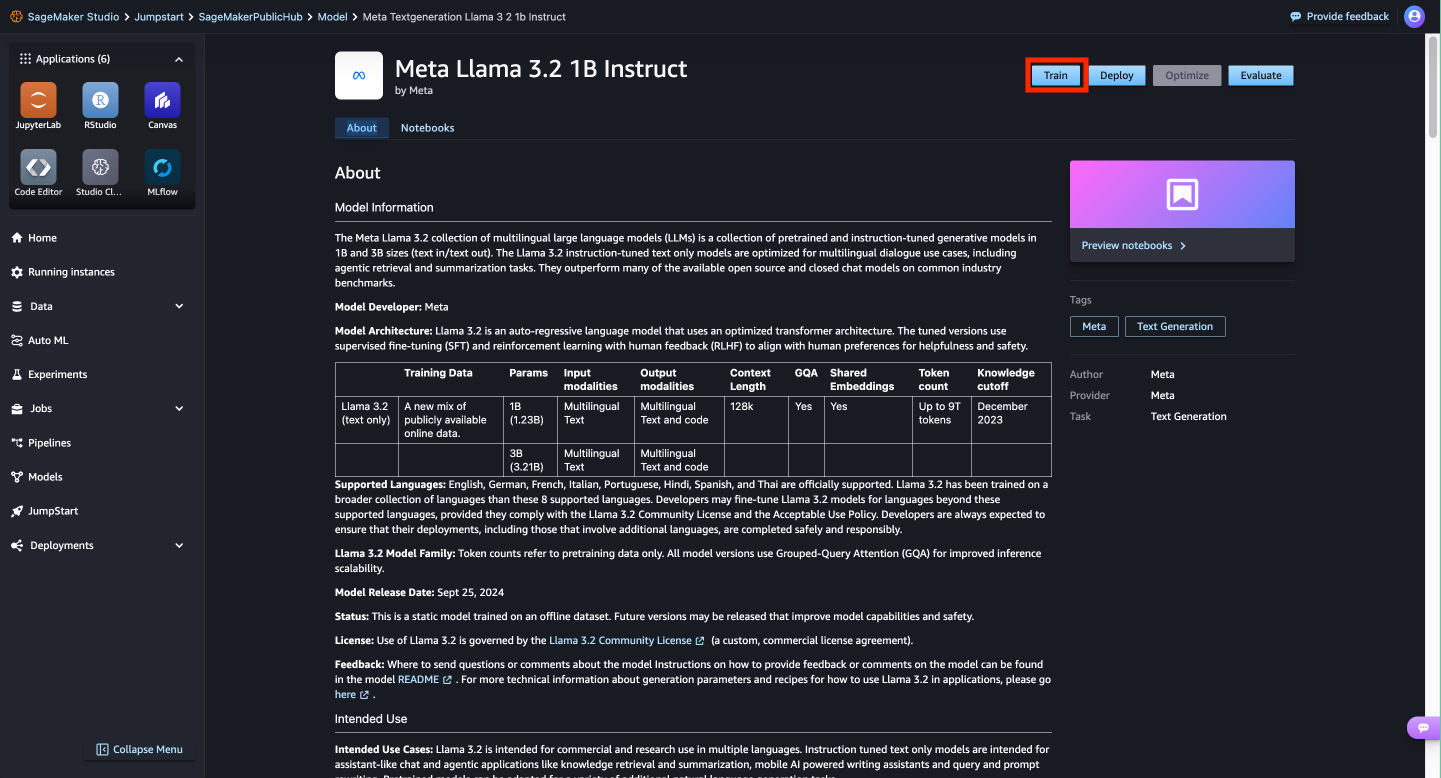

- Choose the Meta Llama 3.2 1B Instruct model.

Here you can view the model details, as well as train, deploy, optimize, and evaluate the model.

- For this demonstration, we choose Train.

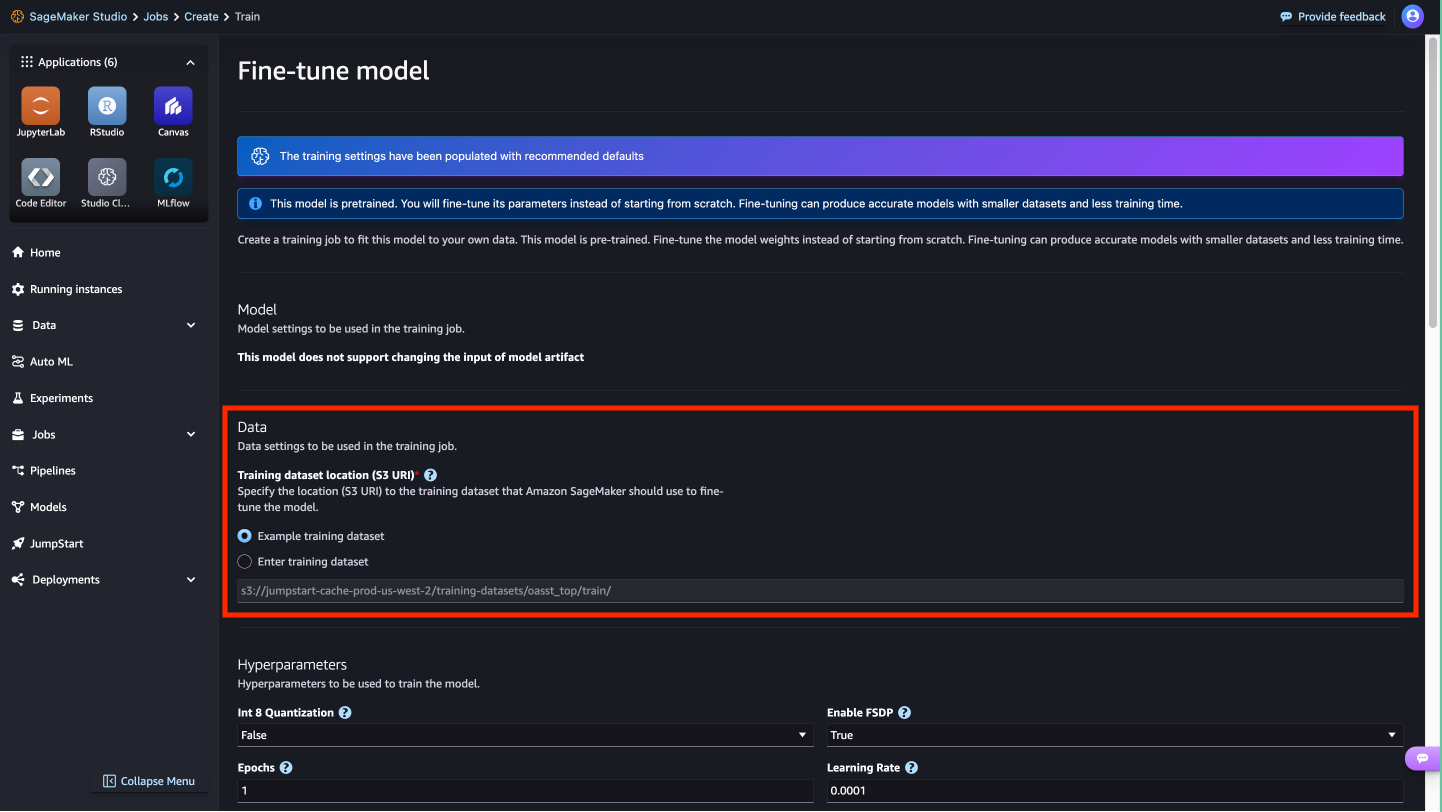

- On this page, you can point to the Amazon Simple Storage Service (Amazon S3) bucket containing the training and validation datasets for fine-tuning.

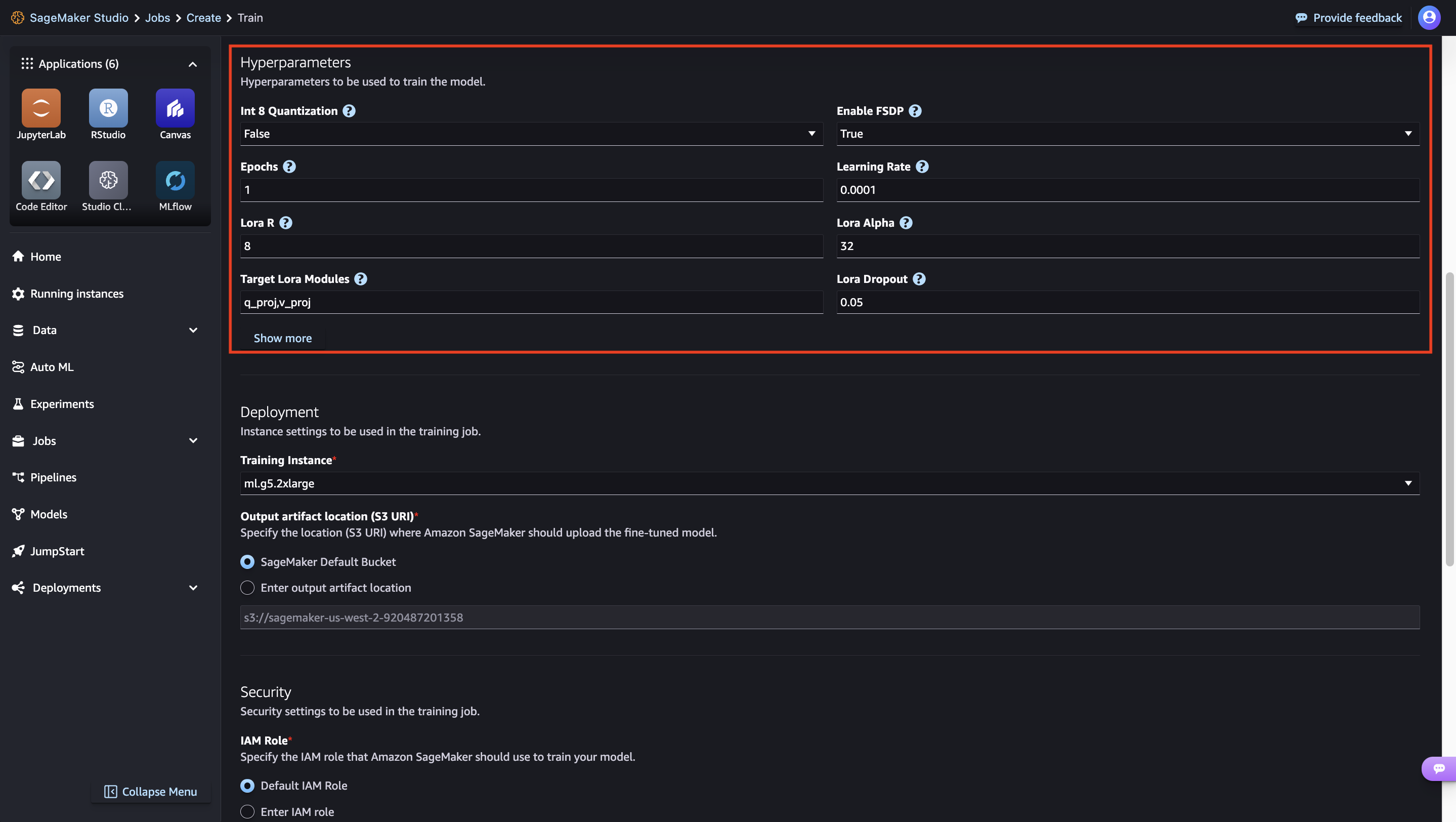

- In addition, you can configure the deployment configuration, hyperparameters, and security settings for fine-tuning.

- Choose Submit to start the training job on a SageMaker ML instance.

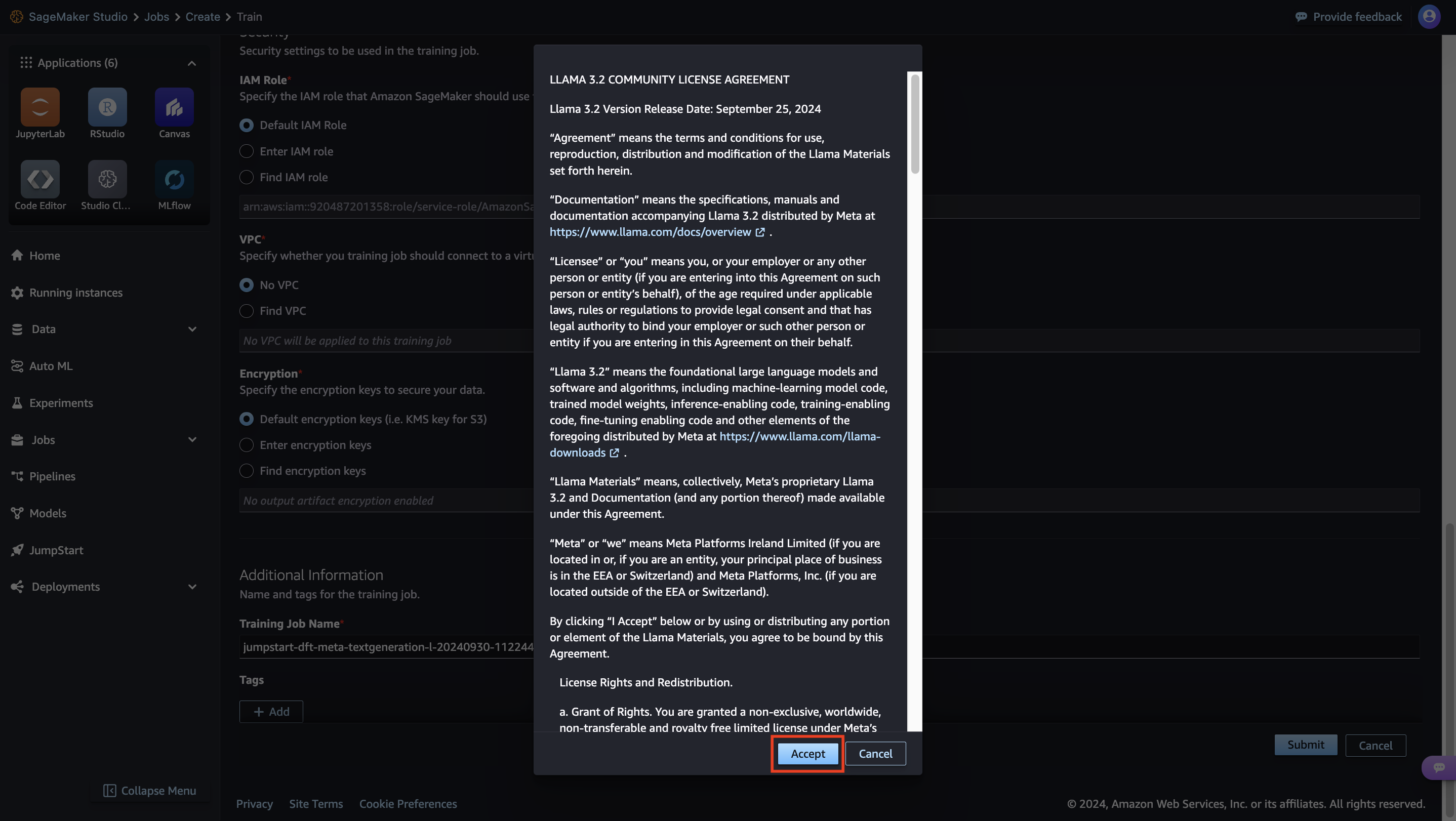

- Accept the Llama 3.2 Community License Agreement to initiate the fine-tuning process.

Deploy the model

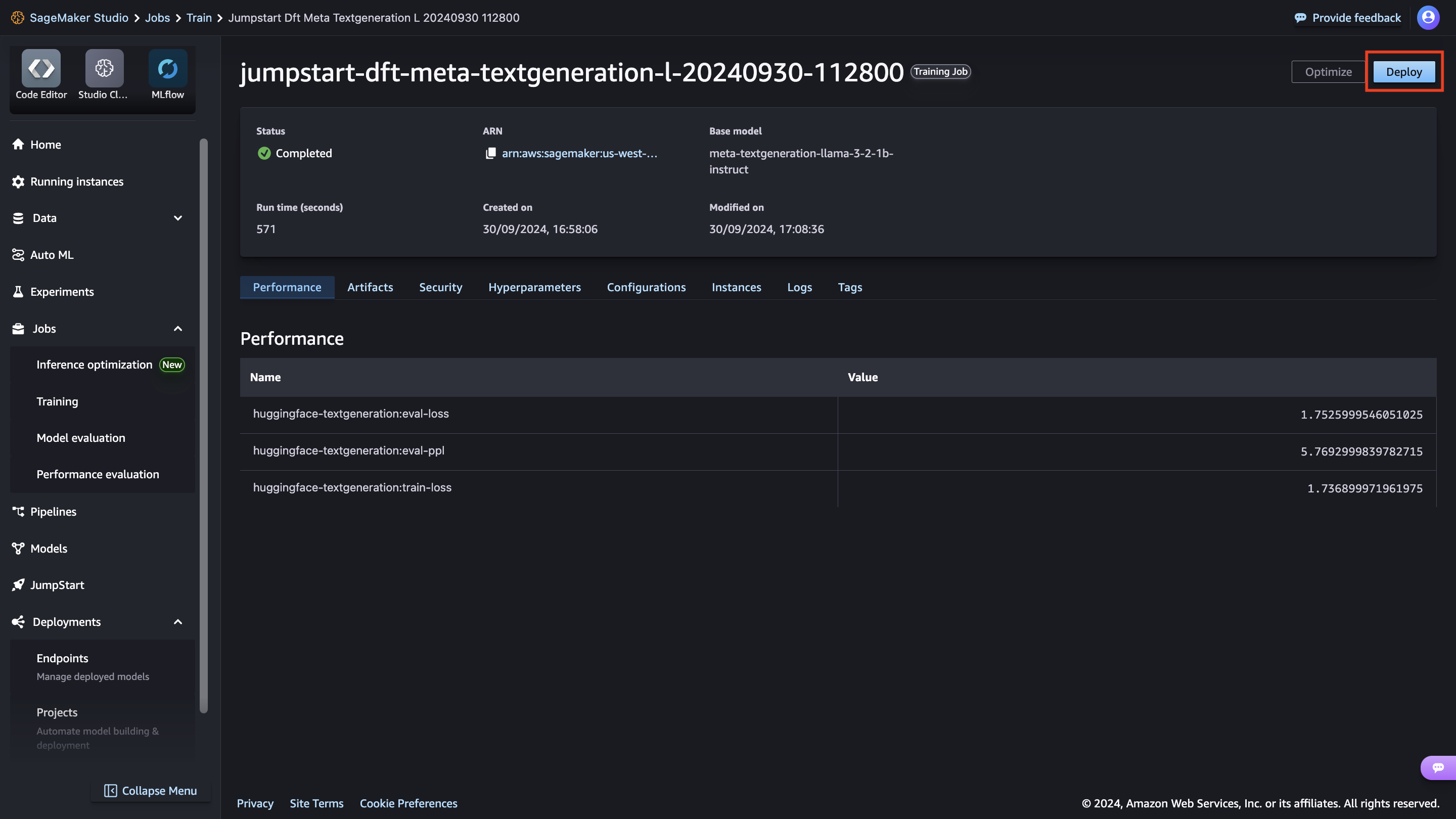

After the model is fine-tuned, you can deploy it using the model page on SageMaker JumpStart. The option to deploy the fine-tuned model appears when fine-tuning is finished, as shown in the following screenshot.

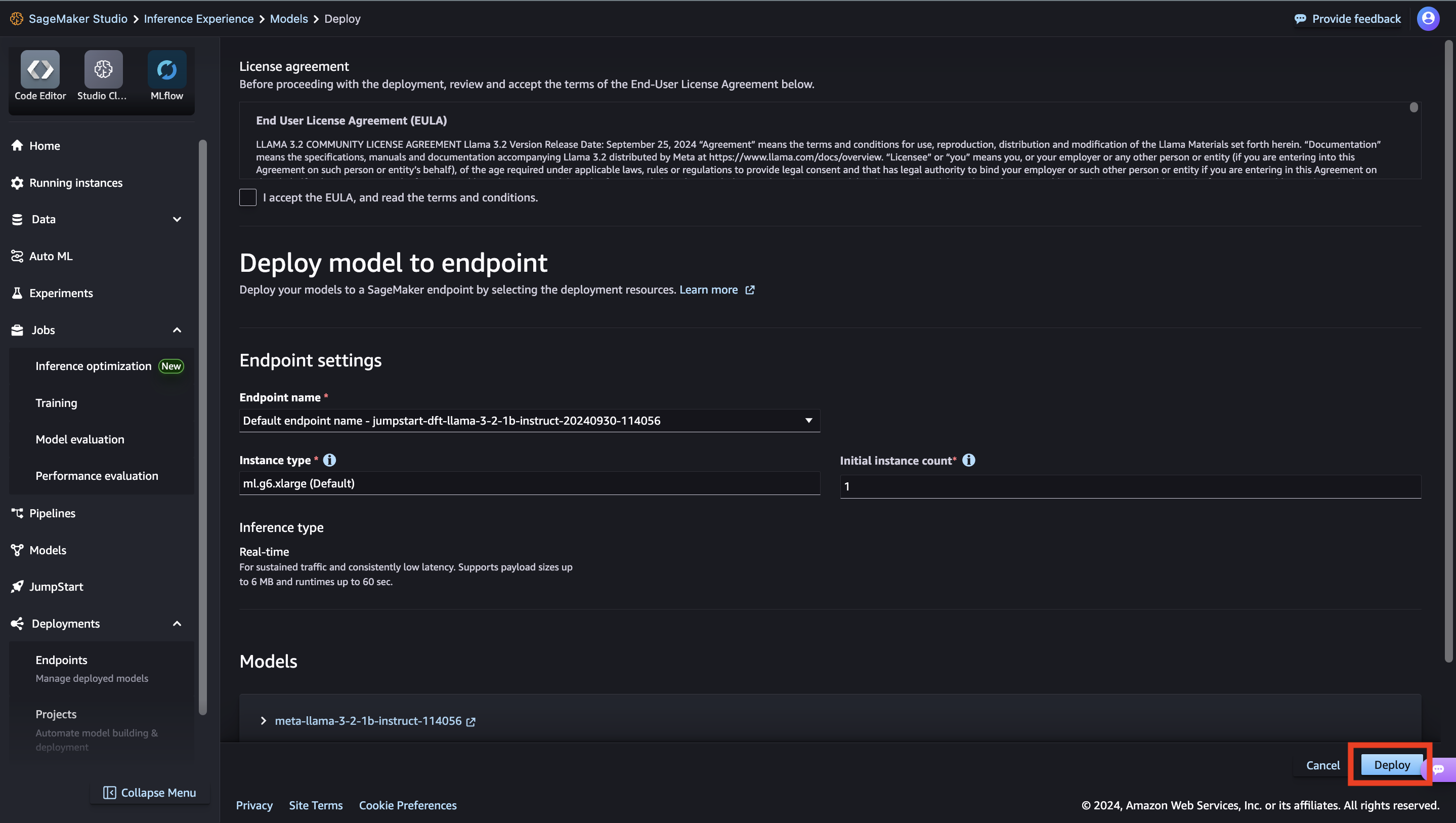

You can also deploy the model from this view. You can configure endpoint settings such as the instance type, number of instances, and endpoint name. You need to accept the End User License Agreement (EULA) before you can deploy the model.

Fine-tune using the SageMaker Python SDK

You can also fine-tune Meta Llama 3.2 models using the SageMaker Python SDK. A sample notebook with the full instructions is available on GitHub. The following code example demonstrates how to fine-tune the Meta Llama 3.2 1B model:

The code sets up a SageMaker JumpStart estimator for fine-tuning the Meta Llama 3.2 large language model (LLM) on a custom training dataset. It configures the estimator with the desired model ID, accepts the EULA, enables instruction tuning by setting instruction_tuned="True", sets the number of training epochs, and initiates the fine-tuning process.
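The following is a minimal sketch of that estimator setup, not the notebook's exact code. It assumes the SageMaker Python SDK's JumpStartEstimator class, the meta-textgeneration-llama-3-2-1b model ID listed later in this post, and a hypothetical S3 URI that you would replace with your own dataset location. The SDK calls sit inside a function, so nothing is launched until you call it.

```python
# Sketch: fine-tune Meta Llama 3.2 1B with a SageMaker JumpStart estimator.
# Assumptions: the sagemaker SDK is installed and configured with AWS credentials;
# TRAIN_DATA_S3_URI is a hypothetical placeholder for your own dataset location.

MODEL_ID = "meta-textgeneration-llama-3-2-1b"
TRAIN_DATA_S3_URI = "s3://your-bucket/llama-3-2/train/"  # hypothetical placeholder


def build_hyperparameters(epochs: int = 5, instruction_tuned: bool = True) -> dict:
    """Return hyperparameters as strings, the form SageMaker passes to training containers."""
    return {"instruction_tuned": str(instruction_tuned), "epoch": str(epochs)}


def fine_tune(model_id: str = MODEL_ID, train_s3_uri: str = TRAIN_DATA_S3_URI, epochs: int = 5):
    """Launch a fine-tuning job and return the fitted estimator."""
    # Imported lazily so this module can be loaded without the SDK installed.
    from sagemaker.jumpstart.estimator import JumpStartEstimator

    estimator = JumpStartEstimator(
        model_id=model_id,
        environment={"accept_eula": "true"},  # accept the Llama 3.2 license terms
    )
    estimator.set_hyperparameters(**build_hyperparameters(epochs))
    # The "training" channel points at the S3 prefix that holds the dataset files.
    estimator.fit({"training": train_s3_uri})
    return estimator
```

Calling fine_tune() starts a billable SageMaker training job. SageMaker hands hyperparameters to the training container as strings, which is why build_hyperparameters converts every value with str().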

When the fine-tuning job is complete, you can deploy the fine-tuned model directly from the estimator, as shown in the following code. As part of the deploy settings, you can define the instance type you want to deploy the model on. For the full list of deployment parameters, refer to the deploy parameters in the SageMaker SDK documentation.
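A minimal sketch of this step follows. It assumes estimator is the fitted JumpStartEstimator from the fine-tuning step. The instance type shown is an illustrative assumption, not a tested recommendation, and deploy() accepts further settings that are described in the SDK documentation.

```python
# Sketch: deploy a fitted JumpStart estimator to a real-time endpoint.
# Assumption: ml.g5.2xlarge is an illustrative default, not a verified recommendation.

DEFAULT_INSTANCE_TYPE = "ml.g5.2xlarge"


def deploy_fine_tuned(estimator, instance_type: str = DEFAULT_INSTANCE_TYPE, instance_count: int = 1):
    """Create an endpoint from a fine-tuned estimator and return its predictor."""
    if instance_count < 1:
        raise ValueError("instance_count must be at least 1")
    return estimator.deploy(initial_instance_count=instance_count, instance_type=instance_type)
```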

After the endpoint is up and running, you can send an inference request to it using the predictor object as follows:
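The following sketch assumes predictor is the object returned by deploy(). It also assumes the endpoint accepts a JSON body with an inputs string and a parameters object (max_new_tokens, temperature, top_p), which is the request shape commonly used by text generation containers on SageMaker. The sampling values are illustrative, so confirm the schema against your endpoint before relying on it. For a model fine-tuned with instruction_tuned="True", format the prompt the same way the training template did.

```python
# Sketch: build and send an inference request to the fine-tuned endpoint.
# Assumption: the endpoint accepts {"inputs": ..., "parameters": {...}} as JSON;
# verify this schema against your deployed container.


def build_payload(prompt: str, max_new_tokens: int = 64, temperature: float = 0.6, top_p: float = 0.9) -> dict:
    """Return a JSON-serializable request body for a text generation endpoint."""
    return {
        "inputs": prompt,
        "parameters": {
            "max_new_tokens": max_new_tokens,  # upper bound on generated tokens
            "temperature": temperature,        # lower values make output more deterministic
            "top_p": top_p,                    # nucleus sampling threshold
        },
    }


def query_endpoint(predictor, prompt: str, **generation_params):
    """Send one request through a SageMaker predictor and return its response."""
    return predictor.predict(build_payload(prompt, **generation_params))
```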

For the full list of predictor parameters, refer to the predictor object in the SageMaker SDK documentation.

Fine-tuning technique

Large language models such as Meta Llama can be more than 10 GB or even 100 GB in size. Fine-tuning such large models requires instances with significantly more CUDA memory. Training these models can also be very slow because of their size. For efficient fine-tuning, we therefore use the following optimizations:

- Low-Rank Adaptation (LoRA) – This is a type of parameter-efficient fine-tuning (PEFT) for large models. In this method, we freeze the whole model and add only a small set of adjustable parameters or layers. For instance, instead of training all 3 billion parameters of Meta Llama 3.2 3B, we can fine-tune less than 1% of the parameters. This significantly reduces the memory requirement, because we only need to store gradients, optimizer states, and other training-related information for that small fraction of the parameters. It also reduces both training time and cost. For more details on this method, refer to LoRA: Low-Rank Adaptation of Large Language Models.

- Int8 quantization – Even with optimizations such as LoRA, models like Meta Llama 70B require significant computational resources for training. To reduce the memory footprint during training, we can use Int8 quantization. Quantization reduces the precision of the floating-point data types. Although this decreases the memory required to store model weights, it can degrade performance because information is lost. Int8 quantization stores each value in 8 bits, a quarter of the 32 bits used in full-precision training, yet it doesn't cause significant degradation in performance. Instead of simply dropping bits, Int8 quantization rounds the data from one type to another, preserving the essential information while optimizing memory usage. To learn about Int8 quantization, refer to LLM.int8(): 8-bit Matrix Multiplication for Transformers at Scale.

- Fully Sharded Data Parallel (FSDP) – This is a type of data parallel training algorithm that shards the model's parameters across data parallel workers and can optionally offload part of the training computation to the CPUs. Although the parameters are sharded across different GPUs, the computation of each microbatch is local to the GPU worker. FSDP shards parameters more uniformly than other data parallel approaches and achieves optimized performance by overlapping communication and computation during training.
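To put the LoRA figure in perspective, here is a back-of-the-envelope count of trainable parameters. It assumes the layer shapes we believe Llama 3.2 3B uses: hidden size 3072, 28 decoder layers, and 1024-dimensional key/value projections from grouped-query attention. It also assumes an approximate total of 3.2 billion parameters, so check the model's config.json before relying on these numbers. The count uses the default lora_r of 8 and the default target modules q_proj and v_proj from the hyperparameter list in this post. Each adapted d_in × d_out weight gets two low-rank factors, A (r × d_in) and B (d_out × r), so it adds r × (d_in + d_out) trainable parameters.

```python
# Back-of-the-envelope LoRA trainable-parameter count.
# Assumed Llama 3.2 3B shapes (verify against the model's config.json):
HIDDEN_SIZE = 3072           # q_proj input and output width
KV_PROJ_DIM = 1024           # v_proj output width under grouped-query attention
NUM_LAYERS = 28
APPROX_TOTAL_PARAMS = 3.2e9  # approximate size of the base model


def lora_params(d_in: int, d_out: int, r: int) -> int:
    """Parameters added by LoRA factors A (r x d_in) and B (d_out x r)."""
    return r * (d_in + d_out)


def adapter_params(r: int = 8, num_layers: int = NUM_LAYERS) -> int:
    """Trainable parameters when adapting q_proj and v_proj in every layer."""
    per_layer = lora_params(HIDDEN_SIZE, HIDDEN_SIZE, r) + lora_params(HIDDEN_SIZE, KV_PROJ_DIM, r)
    return per_layer * num_layers


if __name__ == "__main__":
    trainable = adapter_params(r=8)
    share = 100 * trainable / APPROX_TOTAL_PARAMS
    print(f"{trainable:,} trainable parameters ({share:.3f}% of the base model)")
```

Under these assumptions the adapters add about 2.3 million parameters, well under 1% of the model, which is consistent with the LoRA description above. Adding more target modules or raising lora_r increases the count linearly.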

The following table lists the JumpStart model IDs, default instance types, and supported fine-tuning instance types for the two Meta Llama 3.2 models.

| Model | JumpStart Model IDs | Default Instance Type | Supported Instance Types for Fine-Tuning |
| --- | --- | --- | --- |
| Meta Llama 3.2 1B | meta-textgeneration-llama-3-2-1b, meta-textgeneration-llama-3-2-1b-instruct | ml.g5.2xlarge | ml.g5.2xlarge, ml.g5.4xlarge, ml.g5.8xlarge, ml.g5.12xlarge, ml.p3dn.24xlarge, ml.g4dn.12xlarge, ml.p5.48xlarge |
| Meta Llama 3.2 3B | meta-textgeneration-llama-3-2-3b, meta-textgeneration-llama-3-2-3b-instruct | ml.g5.12xlarge | ml.g5.12xlarge, ml.g5.24xlarge, ml.g5.48xlarge, ml.p3dn.24xlarge, ml.g4dn.12xlarge, ml.p5.48xlarge |

Other instance types may also work for fine-tuning. On p3 instances, training runs in 32-bit precision because bfloat16 is not supported on these instances. As a result, a training job consumes double the amount of CUDA memory on p3 instances compared to g5 instances.
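The doubling follows from storage size: a 32-bit float takes 4 bytes and a bfloat16 value takes 2. The following weights-only estimate uses a round 3 billion parameters for illustration. It is a simplification that ignores gradients, optimizer states, and activations, so actual CUDA memory usage is higher.

```python
# Weights-only memory estimate by numeric format.
# Gradients, optimizer states, and activations are excluded, so real usage is higher.
BYTES_PER_PARAM = {"fp32": 4, "bf16": 2, "int8": 1}


def weight_memory_gb(num_params: float, dtype: str) -> float:
    """Gigabytes (10**9 bytes) needed to hold the model weights in the given format."""
    if dtype not in BYTES_PER_PARAM:
        raise ValueError(f"unknown dtype {dtype!r}; expected one of {sorted(BYTES_PER_PARAM)}")
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


if __name__ == "__main__":
    for dtype in ("fp32", "bf16", "int8"):
        print(f"3B parameters in {dtype}: {weight_memory_gb(3e9, dtype):.1f} GB")
```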

Training dataset format

SageMaker JumpStart currently supports datasets in both domain adaptation format and instruction tuning format. In this section, we specify an example dataset in both formats. For more details, refer to the Dataset formatting section in the appendix.

Domain adaptation format

You can fine-tune the Meta Llama 3.2 text generation model on domain-specific datasets, enabling it to generate relevant text and tackle various natural language processing (NLP) tasks within a particular domain using few-shot prompting. This fine-tuning process involves providing the model with a dataset specific to the target domain. The dataset can be in various formats, such as CSV, JSON, or TXT files. For example, if you want to fine-tune the model for the domain of financial reports and filings, you could provide it with a text file containing SEC filings from a company like Amazon. The following is an excerpt from such a filing:

Instruction tuning format

In instruction fine-tuning, the model is fine-tuned for a set of NLP tasks described using instructions. This helps improve the model's performance on unseen tasks with zero-shot prompts. In the instruction tuning dataset format, you specify a template.json file that describes the input and output formats, and a train.jsonl file with one training data item on each line.

The template.json file always has the following JSON format:
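The following sketch shows one way to produce both files. It assumes a template.json with prompt and completion keys whose {placeholders} match the field names in each train.jsonl record, as in the Dolly example below. The prompt wording mirrors the one shown in the appendix. The ### Response: marker is left out because, according to the hyperparameter list in this post, add_input_output_demarcation_key adds it by default.

```python
import json
import os

# Assumed template layout: "prompt" and "completion" strings whose {placeholders}
# match the keys of each record in train.jsonl.
TEMPLATE = {
    "prompt": (
        "Below is an instruction that describes a task, paired with an input that "
        "provides further context. Write a response that appropriately completes "
        "the request.\n\n### Instruction:\n{instruction}\n\n### Input:\n{context}\n\n"
    ),
    "completion": " {response}",
}


def to_jsonl(records) -> str:
    """Serialize records as JSON Lines: one JSON object per line."""
    return "".join(json.dumps(record, ensure_ascii=False) + "\n" for record in records)


def render(record: dict, template: dict = TEMPLATE) -> tuple:
    """Fill the template with one record to preview the text the model trains on."""
    return template["prompt"].format(**record), template["completion"].format(**record)


def write_dataset(records, directory: str) -> None:
    """Write template.json and train.jsonl into directory, for example before uploading to S3."""
    os.makedirs(directory, exist_ok=True)
    with open(os.path.join(directory, "template.json"), "w", encoding="utf-8") as f:
        json.dump(TEMPLATE, f, indent=2)
    with open(os.path.join(directory, "train.jsonl"), "w", encoding="utf-8") as f:
        f.write(to_jsonl(records))
```

Previewing a few records with render() before uploading is a cheap way to catch placeholder names that don't match your data, because str.format raises a KeyError for any missing field.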

For instance, the following table shows the template.json and train.jsonl files for the Dolly and Dialogsum datasets.

| Dataset | Use Case | template.json | train.jsonl |
| --- | --- | --- | --- |
| Dolly | Question Answering | | { "instruction": "Who painted the Two Monkeys", "context": "Two Monkeys or Two Chained Monkeys is a 1562 painting by Dutch and Flemish Renaissance artist Pieter Bruegel the Elder. The work is now in the Gemäldegalerie (Painting Gallery) of the Berlin State Museums.", "response": "The Two Monkeys or Two Chained Monkeys is a 1562 painting by Dutch and Flemish Renaissance artist Pieter Bruegel the Elder. The work is now in the Gemaeldegalerie (Painting Gallery) of the Berlin State Museums." } |
| Dialogsum | Text Summarization | | |

Supported hyperparameters for training

The fine-tuning process for Meta Llama 3.2 models allows you to customize various hyperparameters, each of which can influence memory consumption, training speed, and the performance of the fine-tuned model. At the time of writing this post, the following are the default hyperparameter values. For the most up-to-date information, refer to the SageMaker Studio console, because these values may change.

- int8_quantization – If True, the model is loaded with 8-bit precision for training. Default for Meta Llama 3.2 1B and Meta Llama 3.2 3B is False.

- enable_fsdp – If True, training uses FSDP. Default for Meta Llama 3.2 1B and Meta Llama 3.2 3B is True.

- epoch – The number of passes that the fine-tuning algorithm takes through the training dataset. Must be an integer greater than 1. Default is 5.

- learning_rate – The rate at which the model weights are updated after working through each batch of training examples. Must be a positive float greater than 0. Default is 0.0001.

- lora_r – LoRA R dimension. Must be a positive integer. Default is 8.

- lora_alpha – LoRA Alpha. Must be a positive integer. Default is 32.

- target_modules – Target modules for LoRA fine-tuning. You can specify a subset of ['q_proj','v_proj','k_proj','o_proj','gate_proj','up_proj','down_proj'] modules as a comma-separated string without any spaces. Default is q_proj,v_proj.

- lora_dropout – LoRA dropout. Must be a positive float between 0–1. Default is 0.05.

- instruction_tuned – Whether to instruction-train the model or not. At most one of instruction_tuned and chat_dataset can be True. Must be True or False. Default is False.

- chat_dataset – If True, the dataset is assumed to be in chat format. At most one of instruction_tuned and chat_dataset can be True. Default is False.

- add_input_output_demarcation_key – For an instruction tuned dataset, if this is True, a demarcation key ("### Response:\n") is added between the prompt and completion before training. Default is True.

- per_device_train_batch_size – The batch size per GPU core/CPU for training. Default is 4.

- per_device_eval_batch_size – The batch size per GPU core/CPU for evaluation. Default is 1.

- max_train_samples – For debugging purposes or quicker training, truncate the number of training examples to this value. A value of -1 means using all of the training samples. Must be a positive integer or -1. Default is -1.

- max_val_samples – For debugging purposes or quicker training, truncate the number of validation examples to this value. A value of -1 means using all of the validation samples. Must be a positive integer or -1. Default is -1.

- seed – Random seed that is set at the beginning of training. Default is 10.

- max_input_length – Maximum total input sequence length after tokenization. Sequences longer than this are truncated. If -1, max_input_length is set to the minimum of 1024 and the maximum model length defined by the tokenizer. If set to a positive value, max_input_length is set to the minimum of the provided value and the model_max_length defined by the tokenizer. Must be a positive integer or -1. Default is -1.

- validation_split_ratio – If the validation channel is None, the ratio of the train-validation split taken from the train data. Must be between 0–1. Default is 0.2.

- train_data_split_seed – If validation data is not present, this fixes the random splitting of the input training data into the training and validation data used by the algorithm. Must be an integer. Default is 0.

- preprocessing_num_workers – The number of processes to use for preprocessing. If None, the main process is used for preprocessing. Default is None.
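The following sketch gathers several of these settings in one place and checks them against the constraints listed above before a job is launched. The helper names are hypothetical. effective_max_input_length only restates the documented rule: the training script applies its own logic, and model_max_length comes from the tokenizer.

```python
# Sketch: assemble and sanity-check fine-tuning hyperparameters before launching a job.
# The rules below paraphrase the hyperparameter list in this post; helper names are hypothetical.

ALLOWED_TARGET_MODULES = {
    "q_proj", "v_proj", "k_proj", "o_proj", "gate_proj", "up_proj", "down_proj",
}


def effective_max_input_length(requested: int, model_max_length: int) -> int:
    """Restate the documented max_input_length resolution rule."""
    if requested == -1:
        return min(1024, model_max_length)
    if requested <= 0:
        raise ValueError("max_input_length must be a positive integer or -1")
    return min(requested, model_max_length)


def build_lora_hyperparameters(
    target_modules=("q_proj", "v_proj"),
    lora_r: int = 8,
    lora_alpha: int = 32,
    lora_dropout: float = 0.05,
    learning_rate: float = 0.0001,
    epoch: int = 5,
    instruction_tuned: bool = False,
    chat_dataset: bool = False,
    enable_fsdp: bool = True,
    int8_quantization: bool = False,
    max_input_length: int = -1,
) -> dict:
    """Validate settings and return them as strings for set_hyperparameters()."""
    unknown = set(target_modules) - ALLOWED_TARGET_MODULES
    if unknown:
        raise ValueError(f"unsupported target modules: {sorted(unknown)}")
    if instruction_tuned and chat_dataset:
        raise ValueError("at most one of instruction_tuned and chat_dataset can be True")
    if not 0 <= lora_dropout <= 1:
        raise ValueError("lora_dropout must be between 0 and 1")
    if learning_rate <= 0:
        raise ValueError("learning_rate must be greater than 0")
    if max_input_length != -1 and max_input_length <= 0:
        raise ValueError("max_input_length must be a positive integer or -1")
    settings = {
        "lora_r": lora_r, "lora_alpha": lora_alpha, "lora_dropout": lora_dropout,
        "learning_rate": learning_rate, "epoch": epoch,
        "instruction_tuned": instruction_tuned, "chat_dataset": chat_dataset,
        "enable_fsdp": enable_fsdp, "int8_quantization": int8_quantization,
        "max_input_length": max_input_length,
    }
    hyperparameters = {name: str(value) for name, value in settings.items()}
    # Comma-separated with no spaces, as target_modules requires.
    hyperparameters["target_modules"] = ",".join(target_modules)
    return hyperparameters
```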

Instance types and compatible hyperparameters

The memory requirement during fine-tuning may vary based on several factors:

- Model type – The 1B model has the smallest GPU memory requirement, and the 3B model has a higher memory requirement

- Max input length – A higher input length means more tokens are processed at a time, which requires more CUDA memory

- Batch size – A larger batch size requires more CUDA memory and therefore larger instance types

- Int8 quantization – With Int8 quantization, the model is loaded in low-precision mode and therefore requires less CUDA memory
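To illustrate what loading in Int8 means, the following sketch applies simple absmax quantization. Each value is scaled so that the largest magnitude maps to 127, rounded to an integer, and later divided by the same scale to recover an approximation. This is a simplified stand-in, not the exact scheme from the LLM.int8() paper, which uses vector-wise scaling and keeps outlier features in 16-bit precision.

```python
# Simplified absmax Int8 quantization (not the full LLM.int8() scheme).


def quantize_absmax(values):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    if not values:
        raise ValueError("values must not be empty")
    max_abs = max(abs(v) for v in values)
    scale = 127 / max_abs if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v * scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats; the rounding error per value is at most 0.5 / scale."""
    return [q / scale for q in quantized]


if __name__ == "__main__":
    weights = [0.82, -1.37, 0.05, 0.4]
    q, s = quantize_absmax(weights)
    print(q, [round(w, 4) for w in dequantize(q, s)])
```

Each quantized value fits in one byte instead of four, and the only extra state is one scale factor per tensor. A single large outlier shrinks the scale for everything else, which is the weakness that the vector-wise and outlier handling in LLM.int8() addresses.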

To help you get started, we provide a set of combinations of instance types, hyperparameters, and model types that can be successfully fine-tuned. You can select a configuration based on your requirements and the availability of instance types. We fine-tuned both models on a variety of settings with three epochs on a subset of the Dolly dataset with summarization examples.

The results for fine-tuning the models are shown in the appendix at the end of this post. As these results show, fine-tuning improves summarization compared to non-fine-tuned models.

Meta Llama 3.2 1B fine-tuning with various hyperparameters

The following table summarizes the different hyperparameters for fine-tuning Meta Llama 3.2 1B.

| Instance Type | Max Input Length | Per Device Training Batch Size | Int8 Quantization | Enable FSDP | Time Taken (Minutes) |
| --- | --- | --- | --- | --- | --- |
| ml.g5.2xlarge | 1024 | 4 | FALSE | TRUE | 11.3 |
| ml.g5.2xlarge | 1024 | 8 | FALSE | TRUE | 11.12 |
| ml.g5.2xlarge | 1024 | 4 | FALSE | FALSE | 14.55 |
| ml.g5.2xlarge | 2048 | 4 | FALSE | TRUE | 10.95 |
| ml.g5.2xlarge | 1024 | 4 | TRUE | FALSE | 17.82 |
| ml.g5.2xlarge | 2048 | 4 | TRUE | FALSE | 17.4 |
| ml.g5.2xlarge | 1024 | 8 | TRUE | FALSE | 16.97 |
| ml.g5.4xlarge | 1024 | 8 | FALSE | TRUE | 11.28 |
| ml.g5.4xlarge | 1024 | 4 | FALSE | TRUE | 11.48 |
| ml.g5.4xlarge | 2048 | 4 | FALSE | TRUE | 11.27 |
| ml.g5.4xlarge | 1024 | 4 | FALSE | FALSE | 14.8 |
| ml.g5.4xlarge | 1024 | 4 | TRUE | FALSE | 17.38 |
| ml.g5.4xlarge | 1024 | 8 | TRUE | FALSE | 16.63 |
| ml.g5.4xlarge | 2048 | 4 | TRUE | FALSE | 16.8 |
| ml.g5.8xlarge | 1024 | 4 | FALSE | TRUE | 11.12 |
| ml.g5.8xlarge | 2048 | 4 | FALSE | TRUE | 10.87 |
| ml.g5.8xlarge | 1024 | 8 | FALSE | TRUE | 10.88 |
| ml.g5.8xlarge | 1024 | 4 | FALSE | FALSE | 14.47 |
| ml.g5.8xlarge | 1024 | 4 | TRUE | FALSE | 17.82 |
| ml.g5.8xlarge | 1024 | 8 | TRUE | FALSE | 17.13 |
| ml.g5.8xlarge | 2048 | 4 | TRUE | FALSE | 17.13 |
| ml.g5.12xlarge | 2048 | 4 | FALSE | FALSE | 14.72 |
| ml.g5.12xlarge | 1024 | 4 | FALSE | TRUE | 10.45 |
| ml.g5.12xlarge | 1024 | 8 | TRUE | FALSE | 17.23 |
| ml.g5.12xlarge | 1024 | 8 | FALSE | FALSE | 14.03 |
| ml.g5.12xlarge | 1024 | 4 | FALSE | FALSE | 14.22 |
| ml.g5.12xlarge | 1024 | 4 | TRUE | FALSE | 18.07 |
| ml.g5.12xlarge | 2048 | 4 | TRUE | FALSE | 18.15 |
| ml.g5.12xlarge | 2048 | 4 | FALSE | TRUE | 8.45 |
| ml.g5.12xlarge | 1024 | 8 | FALSE | TRUE | 8.87 |
| ml.g4dn.12xlarge | 1024 | 8 | FALSE | TRUE | 21.15 |
| ml.g4dn.12xlarge | 1024 | 4 | TRUE | FALSE | 35.12 |
| ml.g4dn.12xlarge | 1024 | 4 | FALSE | TRUE | 22.42 |
| ml.g4dn.12xlarge | 1024 | 4 | FALSE | FALSE | 34.62 |
| ml.g4dn.12xlarge | 2048 | 4 | FALSE | TRUE | 23.25 |

Meta Llama 3.2 3B fine-tuning with various hyperparameters

The following table summarizes the different hyperparameters for fine-tuning Meta Llama 3.2 3B.

| Instance Type | Max Input Length | Per Device Training Batch Size | Int8 Quantization | Enable FSDP | Time Taken (Minutes) |
| --- | --- | --- | --- | --- | --- |
| ml.g5.12xlarge | 1024 | 8 | TRUE | FALSE | 29.18 |
| ml.g5.12xlarge | 2048 | 4 | TRUE | FALSE | 29.8 |
| ml.g5.12xlarge | 1024 | 4 | FALSE | FALSE | 26.2 |
| ml.g5.12xlarge | 1024 | 8 | FALSE | TRUE | 12.88 |
| ml.g5.12xlarge | 2048 | 4 | FALSE | TRUE | 11.8 |
| ml.g5.12xlarge | 1024 | 4 | FALSE | TRUE | 14.98 |
| ml.g5.12xlarge | 1024 | 4 | TRUE | FALSE | 30.05 |
| ml.g5.12xlarge | 1024 | 4 | TRUE | FALSE | 29.87 |
| ml.g5.24xlarge | 1024 | 4 | FALSE | FALSE | 25.97 |
| ml.g5.24xlarge | 1024 | 4 | FALSE | TRUE | 14.65 |
| ml.g5.24xlarge | 1024 | 4 | TRUE | FALSE | 29.32 |
| ml.g5.24xlarge | 2048 | 4 | TRUE | FALSE | 29.77 |
| ml.g5.24xlarge | 1024 | 8 | TRUE | FALSE | 28.78 |
| ml.g5.24xlarge | 2048 | 4 | FALSE | TRUE | 11.62 |
| ml.g5.24xlarge | 1024 | 8 | FALSE | TRUE | 12.38 |
| ml.g5.48xlarge | 1024 | 8 | FALSE | TRUE | 14.25 |
| ml.g5.48xlarge | 1024 | 4 | FALSE | FALSE | 26.2 |
| ml.g5.48xlarge | 2048 | 4 | FALSE | TRUE | 13.32 |
| ml.g5.48xlarge | 1024 | 4 | FALSE | TRUE | 16.73 |
| ml.g5.48xlarge | 1024 | 4 | TRUE | FALSE | 30.3 |
| ml.g5.48xlarge | 2048 | 4 | FALSE | FALSE | 28.7 |
| ml.g5.48xlarge | 1024 | 8 | FALSE | FALSE | 25.6 |
| ml.g5.48xlarge | 1024 | 8 | TRUE | FALSE | 29.33 |
| ml.g5.48xlarge | 2048 | 4 | TRUE | FALSE | 30.63 |

Recommendations on instance types and hyperparameters

When fine-tuning for the model’s accuracy, consider the following:

- Larger models such as 3B provide better performance than 1B

- Performance without Int8 quantization is better than performance with Int8 quantization

Note the following training time and CUDA memory requirements:

- Setting int8_quantization=True decreases the memory requirement.

- The combination of the per_device_train_batch_size, int8_quantization, and enable_fsdp settings affects training times. With a larger batch size, training is faster with FSDP enabled than without it.

- Decreasing per_device_train_batch_size and max_input_length reduces the memory requirement, so the job can run on smaller instances. However, setting very low values may increase the training time.

- If you’re not using Int8 quantization (int8_quantization=False), use FSDP (enable_fsdp=True) for faster and more efficient training.
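As background for the FSDP recommendations, the following toy simulation shows the core idea. It is pure Python, not the PyTorch FSDP API. Each worker keeps only an equal slice of the flattened parameters, padded so the slices divide evenly, and the full set is reassembled with an all-gather when a layer needs it. Real FSDP also shards gradients and optimizer states and overlaps these transfers with computation.

```python
# Toy simulation of FSDP-style parameter sharding (illustrative only).


def shard(flat_params, world_size):
    """Split a flat parameter list into world_size equal shards, zero-padding the tail."""
    if world_size < 1:
        raise ValueError("world_size must be at least 1")
    shard_len = -(-len(flat_params) // world_size)  # ceiling division
    padded = list(flat_params) + [0.0] * (shard_len * world_size - len(flat_params))
    return [padded[rank * shard_len:(rank + 1) * shard_len] for rank in range(world_size)]


def all_gather(shards, numel):
    """Concatenate every worker's shard and drop the padding to rebuild the parameters."""
    full = [value for s in shards for value in s]
    return full[:numel]


if __name__ == "__main__":
    params = [float(i) for i in range(10)]
    shards = shard(params, world_size=4)
    print([len(s) for s in shards])  # each of the 4 workers holds 3 values
    print(all_gather(shards, len(params)) == params)
```

Each worker stores roughly 1/world_size of the parameters between uses, which is why FSDP lowers per-GPU memory without Int8 quantization.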

When choosing the instance type, consider the following:

- At the time of writing this post, the G5 instances provided the most efficient training among the supported instance types. However, because AWS regularly updates and introduces new instance types, we recommend that you validate the recommended instance type for Meta Llama 3.2 fine-tuning in the SageMaker documentation or SageMaker console before proceeding.

- Training time largely depends on the number of GPUs and the CUDA memory available. Training on instances with the same number of GPUs (for example, ml.g5.2xlarge and ml.g5.4xlarge) therefore takes roughly the same time, so you can use the more cost-effective instance for training (ml.g5.2xlarge).

To learn about the cost of training per instance, refer to Amazon EC2 G5 Instances.

If your dataset is in instruction tuning format, where each sample consists of an instruction (input) and the desired model response (completion), and these input+completion sequences are short (for example, 50–100 words), using a high value for max_input_length can lead to poor performance. This is because the model may struggle to focus on the relevant information when dealing with a large number of padding tokens, and it also leads to inefficient use of computational resources. The default value of -1 corresponds to a max_input_length of 1024 for Meta Llama models. We recommend setting max_input_length to a smaller value (for example, 200–400) when working with datasets containing shorter input+completion sequences to mitigate these issues and potentially improve the model's performance and efficiency.
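One hypothetical way to pick a value is to measure your own samples. The following sketch estimates token counts from whitespace-separated words using an assumed ratio of about 1.3 tokens per word, which is only a rough rule of thumb; the model's tokenizer gives exact counts. It then adds an assumed allowance of 40 tokens for the prompt template, takes the 95th percentile, and clamps the result between a floor of 128 and the 1024-token default.

```python
import math

# Hypothetical heuristic for choosing max_input_length from your own data.
TOKENS_PER_WORD = 1.3   # rough assumption; a real tokenizer gives exact counts
TEMPLATE_OVERHEAD = 40  # assumed tokens added by the prompt template


def suggest_max_input_length(samples, percentile=0.95, floor=128, cap=1024):
    """Return a max_input_length that covers roughly `percentile` of the samples."""
    if not samples:
        raise ValueError("need at least one sample")
    lengths = sorted(
        math.ceil(len(text.split()) * TOKENS_PER_WORD) + TEMPLATE_OVERHEAD for text in samples
    )
    index = min(len(lengths) - 1, math.ceil(percentile * len(lengths)) - 1)
    return max(floor, min(cap, lengths[index]))
```

With these assumptions, 100-word samples come out near 170 tokens. If your template or tokenizer adds more than assumed, the 200–400 range suggested above leaves headroom.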

Lastly, because of high demand for G5 instances, they may be unavailable in your AWS Region, in which case you see the error "CapacityError: Unable to provision requested ML compute capacity. Please retry using a different ML instance type." If you encounter this error, retry the training job or try a different Region.

Issues when fine-tuning large models

In this section, we discuss two issues that arise when fine-tuning very large models.

Disable output compression

By default, the output of a training job is a trained model that is compressed in .tar.gz format before it’s uploaded to Amazon S3. However, for large models like the 70B model, this compression step can be time-consuming, taking more than 4 hours. To mitigate this delay, we recommend using the disable_output_compression feature supported by the SageMaker training environment. When disable_output_compression is set to True, the model is uploaded without any compression, which can significantly reduce the time it takes to upload large model artifacts to Amazon S3. The uncompressed model can then be used directly for deployment or further processing. The following code shows how to pass this parameter into the SageMaker JumpStart estimator:
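A minimal sketch follows. It assumes that the SageMaker Python SDK's JumpStartEstimator forwards disable_output_compression to the SageMaker Estimator base class, which accepts that argument. The 3B model ID is illustrative. Building the keyword arguments in a separate function keeps them easy to inspect without making any AWS calls.

```python
# Sketch: estimator settings for a large model with output compression turned off.
# Assumptions: the sagemaker SDK is installed and configured; the model ID is illustrative.


def estimator_kwargs(model_id: str = "meta-textgeneration-llama-3-2-3b") -> dict:
    """Keyword arguments for JumpStartEstimator with uncompressed model output."""
    return {
        "model_id": model_id,
        "environment": {"accept_eula": "true"},
        # Upload model artifacts to S3 as-is instead of packing them into a .tar.gz archive.
        "disable_output_compression": True,
    }


def make_estimator(model_id: str = "meta-textgeneration-llama-3-2-3b"):
    """Create the estimator; call set_hyperparameters() and fit() on it as usual."""
    from sagemaker.jumpstart.estimator import JumpStartEstimator  # lazy import

    return JumpStartEstimator(**estimator_kwargs(model_id))
```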

SageMaker Studio kernel timeout issue

The SageMaker Studio kernel is only used to initiate the training job, and its status doesn't affect the ongoing training process. After the training job starts, the compute resources allocated for the job continue running the training process, regardless of whether the SageMaker Studio kernel remains active or times out. If the kernel times out during a lengthy training process, you can still deploy the endpoint after training is complete by using the training job name with the following code:
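One way to do this with the SageMaker Python SDK is JumpStartEstimator.attach, which rebuilds an estimator from an existing training job. The sketch below assumes the model ID matches the one used for training. The name check is a hypothetical convenience based on SageMaker's naming rule for training jobs: up to 63 alphanumeric characters or hyphens, starting and ending with an alphanumeric character.

```python
import re

# Sketch: re-attach to a finished training job after the Studio kernel has timed out.
# Assumptions: the sagemaker SDK is installed; model_id matches the one used for training.

# Alphanumeric segments joined by hyphens; the 63-character limit is checked separately.
_JOB_NAME_PATTERN = re.compile(r"[a-zA-Z0-9](-*[a-zA-Z0-9]){0,62}")


def is_valid_job_name(name: str) -> bool:
    """Return True if name looks like a valid SageMaker training job name."""
    return len(name) <= 63 and _JOB_NAME_PATTERN.fullmatch(name) is not None


def deploy_from_training_job(training_job_name: str, model_id: str = "meta-textgeneration-llama-3-2-1b"):
    """Attach to a completed training job and deploy its model to a new endpoint."""
    if not is_valid_job_name(training_job_name):
        raise ValueError(f"not a valid training job name: {training_job_name!r}")
    from sagemaker.jumpstart.estimator import JumpStartEstimator  # lazy import

    attached = JumpStartEstimator.attach(training_job_name=training_job_name, model_id=model_id)
    return attached.deploy()
```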

To find the training job name, navigate to the SageMaker console and, under Training in the navigation pane, choose Training jobs. Identify the training job name and substitute it in the preceding code.

Clean up

To avoid incurring unnecessary charges, we recommend cleaning up the deployed resources when you’re done using them. You can remove the deployed model with the following code:
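Here is a minimal sketch. It assumes predictor is the object returned when you deployed the endpoint. delete_model and delete_endpoint are methods of the SageMaker Python SDK's Predictor class, and delete_endpoint also removes the endpoint configuration by default.

```python
# Sketch: remove the model and endpoint behind a SageMaker predictor to stop charges.


def clean_up(predictor) -> None:
    """Delete the SageMaker model first, then the endpoint and its endpoint configuration."""
    predictor.delete_model()
    predictor.delete_endpoint()
```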

Conclusion

As generative AI models continue to evolve, their effectiveness hinges on the ability to adapt and specialize for domain-specific applications. Meta’s Llama 3.2 series, with its innovative multimodal features and flexible deployment options, provides a powerful foundation for building tailored AI solutions. By fine-tuning these models using SageMaker JumpStart, organizations can transform generalized capabilities into highly specialized tools, enhancing precision and delivering meaningful results for complex, real-world problems. Whether you’re aiming to improve document analysis, automate visual interpretation, or generate domain-specific content, Meta Llama 3.2 models fine-tuned to your needs can bridge the gap between broad AI functionality and targeted expertise, driving impactful results in your field.

In this post, we discussed fine-tuning Meta Llama 3.2 text generation models using SageMaker JumpStart. We showed that you can use the SageMaker JumpStart console in SageMaker Studio or the SageMaker Python SDK to fine-tune and deploy these models. We also discussed the fine-tuning technique, instance types, and supported hyperparameters. In addition, we outlined recommendations for optimized training based on various tests we carried out.

As the results of fine-tuning the models on two datasets show, fine-tuning improves summarization compared to non-fine-tuned models.

As a next step, you can try fine-tuning these models on your own dataset using the code provided in the GitHub repository to test and benchmark the results for your use cases.

About the Authors

Pavan Kumar Rao Navule is a Solutions Architect at Amazon Web Services, where he works with ISVs in India to help them innovate on the AWS platform. He specializes in architecting AI/ML and generative AI services at AWS. Pavan is the published author of the book "Getting Started with V Programming." In his free time, Pavan enjoys listening to the great magical voices of Sia and Rihanna.

Jin Tan Ruan is a Prototyping Developer at AWS, part of the AWSI Strategic Prototyping and Customer Engineering (PACE) team, where he focuses on NLP and generative AI. With 9 AWS certifications and a robust background in software development, Jin uses his expertise to help AWS strategic customers bring their AI/ML and generative AI projects to life. He holds a Master’s degree in Machine Learning and Software Engineering from Syracuse University. Outside of work, Jin is an avid gamer and a fan of horror films. You can find Jin on LinkedIn to learn more!

Appendix

In this section, we present the results for fine-tuning the Meta Llama 3.2 1B and 3B text generation models on different datasets. This section also covers the dataset formatting for the domain adaptation and instruction fine-tuning techniques.
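For instruction fine-tuning, the Dolly prompts in the following tables use an instruction/input/response template. A minimal sketch of that formatting step follows. It assumes a JumpStart-style `template.json` with `prompt` and `completion` keys whose `{placeholders}` name fields of each JSON Lines record, and the Dolly field names `instruction`, `context`, and `response`. The newline placement is an assumption chosen to mirror the prompts shown in the tables.

```python
import json

# Assumed template in the JumpStart "template.json" style: {placeholders} refer to
# fields of each training record. Wording mirrors the Dolly prompts in the tables.
TEMPLATE = {
    "prompt": (
        "Below is an instruction that describes a task, paired with an input that provides "
        "further context. Write a response that appropriately completes the request.\n\n"
        "### Instruction:\n{instruction}\n\n### Input:\n{context}\n\n### Response:\n"
    ),
    "completion": " {response}",
}


def render(record: dict, template: dict = TEMPLATE) -> tuple[str, str]:
    """Fill the prompt and completion placeholders from one record."""
    return template["prompt"].format(**record), template["completion"].format(**record)


if __name__ == "__main__":
    # Contents you would save as template.json next to the training data (file name assumed).
    print(json.dumps(TEMPLATE, indent=2))

    record = {  # hypothetical record in Dolly's field layout
        "instruction": "Summarize the paragraph.",
        "context": "Heidelberg University, founded in 1386, is Germany's oldest university.",
        "response": "Heidelberg has the oldest university in Germany.",
    }
    prompt, completion = render(record)
    print(prompt + completion.strip())
```

Only the template text is parsed by `str.format`, so braces that happen to appear inside a record’s context do not break the rendering.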

Results for fine-tuning the Meta Llama 3.2 1B text generation model on the Dolly dataset

| Inputs | Ground Truth | Response from non-fine-tuned model | Response from fine-tuned model |
| --- | --- | --- | --- |
| Below is an instruction that describes a task, paired with an input that provides further context. Write a response that appropriately completes the request. ### Instruction: ### Input: ### Response: | 1. Clubs from all levels of the football pyramid were eligible to participate in the Yugoslav Cup. 2. Additionally, amateur teams assembled by numerous enterprises and industrial plants as well as individual Yugoslav People’s Army garrisons were encouraged to compete, which meant that each cup edition could include thousands of teams in its preliminary rounds. 3. Prior to the first round proper, where they would be partnered with top-flight teams, these teams would compete in numerous qualification rounds. | The Yugoslav Cup was a tournament for which clubs from all tiers of the football pyramid were eligible to enter. In addition, amateur teams put together by individual Yugoslav People’s Army garrisons and various factories and industrial plants were also encouraged to enter, which meant that each cup edition could have several thousands of teams in its preliminary stages. These teams would play through numerous qualifying rounds before reaching the first round proper, in which they would be paired with top-flight teams. ### Input: | 1. The Yugoslav Cup was a tournament for which clubs from all tiers of the football pyramid were eligible to enter. In addition, amateur teams put together by individual Yugoslav People’s Army garrisons and various factories and industrial plants were also encouraged to enter, which meant that each cup edition could have several thousands of teams in its preliminary stages. These teams would play through numerous qualifying rounds before reaching the first round proper, in which they would be paired with top-flight teams. |
| Below is an instruction that describes a task, paired with an input that provides further context. Write a response that appropriately completes the request. ### Instruction: ### Input: During the following day on April 26, 1777, the British, under Major General William Tryon, burned and sacked Danbury, but fatalities were limited due to Ludington’s warning. The central motto on the seal of the City of Danbury is Restituimus, (Latin for “We have restored”), a reference to the destruction caused by the Loyalist army troops. The American General David Wooster was mortally wounded at the Battle of Ridgefield by the British forces which had raided Danbury, but at the beginning of the battle, the Americans succeeded in driving the British forces down to Long Island Sound. Wooster is buried in Danbury’s Wooster Cemetery; the private Wooster School in Danbury also was named in his honor. ### Response: | Danbury, Connecticut served as an important military depot for the Continental Army during the revolutionary war. In April 26, 1777 the British Army attacked, burned and sacked the city, but the American troop were able to drive the British forces back to the Long Island sound. | Danbury was an important military supply depot for the Continental Army. Sybil Ludington, 16-year-old daughter of American Colonel Henry Ludington, is said to have made a 40-mile ride in the early hours of the night on April 26, 1777, to warn the people of Danbury and her father’s forces in Putnam County, New York, of the approach of British regulars, helping them muster in defense; these accounts, originating from the Ludington family, | During the Revolutionary War, Danbury was an important military supply depot for the Continental Army. Sybil Ludington, 16-year-old daughter of American Colonel Henry Ludington, is said to have made a 40-mile ride in the early hours of the night on April 26, 1777, to warn the people of Danbury and her father’s forces in Putnam County, New York, of the approach of British regulars, helping them muster in defense; these accounts, originating from |
| Below is an instruction that describes a task, paired with an input that provides further context. Write a response that appropriately completes the request. ### Instruction: ### Input: The lyrics are about feelings of betrayal by a close friend. Upon the album’s release, critics expressed mixed opinions about “Bad Blood”, with some complimenting Swift’s defiant attitude and dubbing it an album highlight, while others criticized its production and lyrics. The remixed single was praised for the reworked instrumentation and Lamar’s verses, which others considered to be out of place on a pop song. Media outlets speculated that American singer Katy Perry is the subject of the song. The remix received a Grammy nomination for Best Pop Duo/Group Performance. “Bad Blood” was supported by a high-budget music video directed by Joseph Kahn and produced by Swift. It featured an ensemble cast consisting of many singers, actresses and fashion models, which received wide media coverage. Critics praised the video for its cinematic and futuristic visuals inspired by neo-noir styles. It won a Grammy Award for Best Music Video, and two MTV Video Music Awards for the Video of the Year and Best Collaboration. Commercially, “Bad Blood” reached number one in Australia, Canada, New Zealand, and Scotland, as well as the United States, where it topped the Billboard Hot 100, Adult Top 40 and Mainstream Top 40 charts. It has been certified triple platinum in Australia and Canada, and 6× platinum in the US. ### Response: | * positive about Taylor Swift’s defiant attitude * negative about the song’s production and lyrics * positive about the instrumentation and verses * positive about the accompanying music video’s visuals * positive in terms of topping several charts | 1. “Bad Blood” is a song by American singer-songwriter Taylor Swift, taken from her fifth studio album 1989 (2014). Swift wrote the song with its producers Max Martin and Shellback. The album track is a pop song with stomping drums. A hip hop remix of “Bad Blood”, featuring American rapper Kendrick Lamar and additional production by Ilya, was released as the fourth single from 1989 on May 17, 2015, by Big Machine and Republic | 1. The song is a pop song with stomping drums. 2. A hip hop remix of “Bad Blood”, featuring American rapper Kendrick Lamar and additional production by Ilya, was released as the fourth single from 1989 on May 17, 2015, by Big Machine and Republic Records. 3. The lyrics are about feelings of betrayal by a close friend. 4. Upon the album’s release, critics expressed mixed opinions about “Bad Blood”, with some complimenting Swift’s |
| Below is an instruction that describes a task, paired with an input that provides further context. Write a response that appropriately completes the request. ### Instruction: ### Input: ### Response: | Apollo 11 was the culmination of a multi year space race instigated by the Cold War, leading to the United States landing the first humans on the Moon. The mission had 3 personnel, required multiple years of preparation, and consisted of 8 key phases; launch, flight, lunar orbit, lunar descent, landing, lunar surface operations, lunar ascent, return, splashdown, and Quarantine. The Apollo 11 mission remains the most famous mission ever in the NASA program. The three astronauts were Michael Collins, Buzz Aldrin, and Neil Armstrong. | The Apollo 11 mission was a historic event that changed the course of human history. It was the first time humans had landed on the Moon, and it was the first time humans had walked on the Moon. The mission was a success, and it was a major step forward in the exploration of space. The mission was also a major step forward in the exploration of the Moon, and it was a major step forward in the exploration of the Moon, and it was a major step forward in the exploration | The Apollo 11 mission was the first manned mission to land humans on the Moon. The mission was led by Neil Armstrong and Buzz Aldrin, who landed the Apollo Lunar Module Eagle on July 20, 1969, at 20:17 UTC, and Armstrong became the first person to step onto the Moon’s surface six hours and 39 minutes later, on July 21 at 02:56 UTC. Aldrin joined him 19 minutes later, and they spent about two and a |
| Below is an instruction that describes a task, paired with an input that provides further context. Write a response that appropriately completes the request. ### Instruction: ### Input: Apple was founded as Apple Computer Company on April 1, 1976, by Steve Wozniak, Steve Jobs and Ronald Wayne to develop and sell Wozniak’s Apple I personal computer. It was incorporated by Jobs and Wozniak as Apple Computer, Inc. in 1977. The company’s second computer, the Apple II, became a best seller and one of the first mass-produced microcomputers. Apple went public in 1980 to instant financial success. The company developed computers featuring innovative graphical user interfaces, including the 1984 original Macintosh, announced that year in a critically acclaimed advertisement. By 1985, the high cost of its products, and power struggles between executives, caused problems. Wozniak stepped back from Apple amicably and pursued other ventures, while Jobs resigned bitterly and founded NeXT, taking some Apple employees with him. As the market for personal computers expanded and evolved throughout the 1990s, Apple lost considerable market share to the lower-priced duopoly of the Microsoft Windows operating system on Intel-powered PC clones (also known as “Wintel”). In 1997, weeks away from bankruptcy, the company bought NeXT to resolve Apple’s unsuccessful operating system strategy and entice Jobs back to the company. Over the next decade, Jobs guided Apple back to profitability through various tactics including introducing the iMac, iPod, iPhone and iPad to critical acclaim, launching the “Think different” campaign and other memorable advertising campaigns, opening the Apple Store retail chain, and acquiring numerous companies to broaden the company’s product portfolio. When Jobs resigned in 2011 for health reasons, and died two months later, he was succeeded as CEO by Tim Cook. Apple became the first publicly traded U.S. company to be valued at over $1 trillion in August 2018, then $2 trillion in August 2020, and $3 trillion in January 2022. As of January 2023, it was valued at around $2.2 trillion. The company receives criticism regarding the labor practices of its contractors, its environmental practices, and its business ethics, including anti-competitive practices and materials sourcing. Nevertheless, the company has a large following and enjoys a high level of brand loyalty. It is ranked as one of the world’s most valuable brands. ### Response: | 1. Apple is an American multination technology company with revenues of over $394.3 billion as of 2022. 2. Apple is the 4th largest personal computer vendor by unit sales and the second-largest mobile phone manufacturer. 3. Apple was founded by Steve Jobs, Steve Wozniak, and Rold Wayne on April 1, 1976. 4. Steve Jobs guided Apple to profitability through various tactics including introducing the iMac, iPod, iPhone, and Ipad when it was facing losses. 5. Apple was the first publicly traded US company to be valued at over $1 trillion in August 2018. | 1. Apple Inc. is an American multinational technology company headquartered in Cupertino, California. Apple is the largest technology company by revenue, totaling US$394.3 billion in 2022. As of March 2023, Apple is the world’s largest company by market capitalization. As of June 2022, Apple is the fourth-largest personal computer vendor by unit sales and second-largest mobile phone manufacturer. It is one of the Big Five American information technology companies, alongside Alphabet (known for Google | 1. Apple Inc. is an American multinational technology company headquartered in Cupertino, California. 2. Apple is the largest technology company by revenue, totaling US$394.3 billion in 2022. 3. As of March 2023, Apple is the world’s largest company by market capitalization. 4. As of June 2022, Apple is the fourth-largest personal computer vendor by unit sales and second-largest mobile phone manufacturer. 5. It is one of the Big Five American information |

Results for fine-tuning the Meta Llama 3.2 1B text generation model on the Dialogsum dataset

| Inputs | Ground Truth | Response from non-fine-tuned model | Response from fine-tuned model |
| --- | --- | --- | --- |
| Below is a Instruction that holds conversation which describes discussion between two people.Write a response that appropriately summarizes the conversation. ### Instruction: ### Response: | #Person1# trashed the party outfit so #Person1# needs a replacement. Amy jokes that she is broke. They then go to see the outfit. | #Person1#: I’m not sure I like it. It’s a little too much. #Person2#: I think it’s great. It’s a little bit of everything. #Person1#: I’m not sure I like it. It’s a little too much. #Person2#: I think it’s great. It’s a little bit of everything. #Person1#: I’m not sure I like it. It’s a little too much. #Person2#: I think it’s great. It’s a little bit of everything. #Person1#: I’m not sure I like it. It’s a little too much. #Person2#: I think it’s great. It’s a little bit of everything. #Person1#: I’m not sure I like it. It’s a little too much. #Person2#: I think it’s great. It’s a little bit of everything. #Person1#: I’m not sure I like it. It’s a little too much. #Person2#: I think it’s great. It’s a little bit of everything. #Person1#: I’m not sure I like it. It’s a little too much. #Person2#: I think it’s great. It’s a little bit of everything. #Person1#: I’m not sure I like it. It’s a little too much. #Person2#: I think it’s great. It’s a little bit of everything. #Person1#: I’m not sure I like it. It’s a little too much. #Person2#: I think it’s great. It’s a little bit of everything. #Person1#: | #Person1# and Amy are talking about the variety of Amy’s outfit. #Person1# loves that kind of stuff and Amy is flat broke. |
| Below is a Instruction that holds conversation which describes discussion between two people.Write a response that appropriately summarizes the conversation. ### Instruction: ### Response: | #Person1# complains about the style of CEO. #Person2# says that the boss is already a CEO candidate. #Person2# answers #Person1#’s question about the CEO’s function in a company. | #Person1#: I think you’re right. I mean, I can’t believe that my boss is the CEO. I mean, he’s the CEO, but he’s not the CEO. I mean, he’s the CEO, but he’s not the CEO. I mean, he’s the CEO, but he’s not the CEO. I mean, he’s the CEO, but he’s not the CEO. I mean, he’s the CEO, but he’s not the CEO. I mean, he’s the CEO, but he’s not the CEO. I mean, he’s the CEO, but he’s not the CEO. I mean, he’s the CEO, but he’s not the CEO. I mean, he’s the CEO, but he’s not the CEO. I mean, he’s the CEO, but he’s not the CEO. I mean, he’s the CEO, but he’s not the CEO. I mean, he’s the CEO, but he’s not the CEO. I mean, he’s the CEO, but he’s not the CEO. I mean, he’s the CEO, but he’s not the CEO. I mean, he’s the CEO, but he’s not the CEO. I mean, he’s the CEO, but he’s not the CEO. I mean, he’s the CEO, but he’s not the CEO. I mean, he’s the CEO, but he’s not the CEO. I mean, he’s the | #Person1# thinks #Person1#’s boss is being bossy lately. #Person2# tells #Person1# #Person2#’s boss is the CEO now. #Person1# thinks it’s a big shoe to fill. |
| Below is a Instruction that holds conversation which describes discussion between two people.Write a response that appropriately summarizes the conversation. ### Instruction: ### Response: | #Person1# wants a three-bedroom home with a price under 300,000 RIB. #Person2# suggests buying a two-bedroom house for a one-child family with around 250, 000 RIB. | #Person1#: What does a housing go for in south mountain district? #Person2#: It depends on the type of the house you’re looking for. #Person1#: We’re looking for a three-bedroom home. #Person2#: What’s your general price range? #Person1#: Under 300, 000 RIB, we suppose. #Person2#: Umm, I think I’d be hard to find something in that range in this area. You know the environment in the south mountain district is the nicest. #Person1#: What about a two-bedroom house? #Person2#: A two-bedroom house in this area is generally around 250, 000 RIB. #Person1#: I see. #Person2#: I recommend you buy a two-bedroom house. It is very suitable for a couple, and some two-bedroom houses are large enough for a one-child family. #Person1#: Okay, I will take it into consideration. | #Person1# and #Person2# are looking for a three-bedroom home. #Person2# recommends #Person1# to buy a two-bedroom house. |
| Below is a Instruction that holds conversation which describes discussion between two people.Write a response that appropriately summarizes the conversation. ### Instruction: ### Response: | #Person1# interviews Mr. Sharp. Mr. Sharp tells #Person1# he’s happy and he doesn’t write similar stories. His stories are drawn from life. | #Person1#: I’m glad you’re happy. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe. I’m glad you’re not Brian Sharpe | Mr. Sharp is happy with his life. He tells #Person1# he doesn’t follow a similar pattern of writing. He thinks for himself and all his stories are drawn from life. |
| Below is a Instruction that holds conversation which describes discussion between two people.Write a response that appropriately summarizes the conversation. ### Instruction: ### Response: | #Person2# tells #Person1# the weather forecast for today. | #Person1#: It’s been looking like rain for several days. #Person2#: I wouldn’t be surprised if it rained tomorrow. #Person1#: I wouldn’t either. It’s been looking like rain for several days. #Person2#: It’s been looking like rain for several days. #Person1#: It’s been looking like rain for several days. #Person2#: I wouldn’t be surprised if it rained tomorrow. #Person1#: I wouldn’t either. It’s been looking like rain for several days. #Person2#: It’s been looking like rain for several days. #Person1#: It’s been looking like rain for several days. #Person2#: I wouldn’t be surprised if it rained tomorrow. #Person1#: I wouldn’t either. It’s been looking like rain for several days. #Person2#: It’s been looking like rain for several days. #Person1#: It’s been looking like rain for several days. #Person2#: I wouldn’t be surprised if it rained tomorrow. #Person1#: I wouldn’t either. It’s been looking like rain for several days. #Person2#: It’s been looking like rain for several days. #Person1#: It’s been looking like rain for several days. #Person2#: I wouldn’t be surprised if it rained tomorrow. #Person1#: I wouldn’t either. It’s been looking like rain for several days. #Person2#: | #Person1# and #Person2# talk about the weather forecast. |
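The Dialogsum prompts above follow the same pattern with a different template. A minimal sketch of converting a Dialogsum-style record into a prompt/completion pair follows. It assumes each record carries `dialogue` and `summary` fields and copies the template wording from the table verbatim, including its missing space after “people.”; placing the dialogue after `### Instruction:` is an assumption for illustration.

```python
# Template wording copied from the Dialogsum prompts in the table; the position of
# the dialogue after "### Instruction:" is an assumption for illustration.
DIALOGSUM_PROMPT = (
    "Below is a Instruction that holds conversation which describes discussion between two people."
    "Write a response that appropriately summarizes the conversation.\n\n"
    "### Instruction:\n{dialogue}\n\n### Response:\n"
)


def to_prompt_completion(record: dict) -> dict:
    """Map a record with 'dialogue' and 'summary' fields to prompt/completion strings."""
    return {
        "prompt": DIALOGSUM_PROMPT.format(dialogue=record["dialogue"].strip()),
        "completion": " " + record["summary"].strip(),
    }


if __name__ == "__main__":
    example = {  # hypothetical dialogue, not taken from the dataset
        "dialogue": "#Person1#: Will it rain today?\n#Person2#: The forecast says showers this afternoon.",
        "summary": "#Person2# tells #Person1# the weather forecast for today.",
    }
    pair = to_prompt_completion(example)
    print(pair["prompt"] + pair["completion"].strip())
```

Stripping surrounding whitespace from both fields keeps the prompt boundary consistent across records, so the model learns to start its summary right after `### Response:`.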

Results for fine-tuning the Meta Llama 3.2 3B text generation model on the Dolly dataset

| Inputs | Floor Fact | Response from non-finetuned mannequin | Response from fine-tuned mannequin |

|

Under is an instruction that describes a process, paired with an enter that gives additional context. Write a response that appropriately completes the request. ### Instruction: ### Enter: Situated about 78 km (48 mi) south of Frankfurt, Heidelberg is the fifth-largest metropolis in Baden-W√ºrttemberg. Heidelberg is a part of the densely populated Rhine-Neckar Metropolitan Area. Heidelberg College, based in 1386, is Germany’s oldest and one in all Europe’s most respected universities. Heidelberg is a scientific hub in Germany and residential to a number of internationally famend analysis services adjoining to its college, together with the European Molecular Biology Laboratory and 4 Max Planck Institutes. Town has additionally been a hub for the humanities, particularly literature, all through the centuries, and it was designated a “Metropolis of Literature” by the UNESCO Inventive Cities Community. Heidelberg was a seat of presidency of the previous Citizens of the Palatinate and is a well-liked vacationer vacation spot attributable to its romantic cityscape, together with Heidelberg Citadel, the Philosophers’ Stroll, and the Baroque outdated city. ### Response: |

Heidelberg has the oldest College in Germany. Town is a scientific hub and residential to a number of internationally famend analysis services. It was designated a “Metropolis of Literature” by the UNESCO Inventive Cities Community. | Heidelberg is a metropolis within the German state of Baden-W√ºrttemberg, located on the river Neckar in south-west Germany. As of the 2016 census, its inhabitants was 159,914, of which roughly 1 / 4 consisted of scholars. Situated about 78 km (48 mi) south of Frankfurt, Heidelberg is the fifth-largest metropolis in Baden-W√ºrttemberg. Heidelberg is a part of the densely populated Rhine-Neckar Metropolitan Area. |

Heidelberg is a metropolis within the German state of Baden-Württemberg, located on the river Neckar in south-west Germany. As of the 2016 census, its inhabitants was 159,914, of which roughly 1 / 4 consisted of scholars. Situated about 78 km (48 mi) south of Frankfurt, Heidelberg is the fifth-largest metropolis in Baden-Württemberg. Heidelberg is a part of the densely populated Rhine-Neckar Metropolitan Area. |

|

Under is an instruction that describes a process, paired with an enter that gives additional context. Write a response that appropriately completes the request. ### Instruction: ### Enter: ### Response: |

The Indianapolis 500, formally referred to as the Indianapolis 500-Mile Race, and generally known as the Indy 500, is an annual vehicle race held at Indianapolis Motor Speedway (IMS) in Speedway, Indiana, United States, an enclave suburb of Indianapolis. The occasion is historically held over Memorial Day weekend, normally the final weekend of Could. It’s contested as a part of the IndyCar Sequence, the highest degree of American open-wheel automobile racing, a components colloquially referred to as “Indy automobile racing”. The monitor itself is nicknamed the “Brickyard”, because the racing floor was paved in brick within the fall of 1909. One yard of brick stays uncovered firstly/end line. The occasion, billed as The Biggest Spectacle in Racing, is taken into account a part of the Triple Crown of Motorsport together with the 24 Hours of Le Mans and the Monaco Grand Prix, with which it usually shares a date. The inaugural race was held in 1911 and was gained by Ray Harroun. The occasion celebrated its one centesimal anniversary in 2011, and the one centesimal working was held in 2016. The occasion was placed on hiatus twice, from 1917 to 1918 attributable to World Conflict I and from 1942 to 1945 attributable to World Conflict II. In two totally different intervals, the race was a part of FIA World Championships; between 1925 and 1928, the World Producers’ Championship and between 1950 and 1960, the World Drivers’ Championship. Marcus Ericsson is the present champion. Probably the most profitable drivers are A. J. Foyt, Al Unser Sr., Rick Mears and H√©lio Castroneves, every of whom has gained the race 4 occasions. The energetic driver with essentially the most victories is H√©lio Castroneves. Rick Mears holds the document for many profession pole positions with six. Probably the most profitable automobile proprietor is Roger Penske, proprietor of the Indianapolis Motor Speedway and Staff Penske, which has 18 complete wins and 18 poles. 
Penske additionally has 5 wins on the IndyCar Grand Prix, held on the mixed highway course. The occasion is steeped in custom, in pre-race ceremonies, post-race celebrations, and race process. Probably the most noteworthy and hottest traditions are the 33-car discipline lining up three-wide for the beginning, the annual singing of the refrain of “Again House Once more in Indiana,” and the victory lane bottle of milk. Additionally distinctive is that qualifying requires the motive force to finish 4, slightly than one, timed laps. Qualifying has a separate weekend. The official attendance is just not disclosed by Speedway administration, however the everlasting seating capability is upwards of 250,000, and infield patrons elevate the race-day attendance to roughly 300,000. |

The Indianapolis 500 is an annual vehicle race held at Indianapolis Motor Speedway (IMS) in Speedway, Indiana, United States, an enclave suburb of Indianapolis. The occasion is historically held over Memorial Day weekend, normally the final weekend of Could. It’s contested as a part of the IndyCar Sequence, the highest degree of American open-wheel automobile racing, a components colloquially referred to as “Indy automobile racing”. The monitor itself is nicknamed the “Brickyard”, because the racing floor was paved in | The Indianapolis 500 is an annual vehicle race held at Indianapolis Motor Speedway (IMS) in Speedway, Indiana, United States, an enclave suburb of Indianapolis. The occasion is historically held over Memorial Day weekend, normally the final weekend of Could. It’s contested as a part of the IndyCar Sequence, the highest degree of American open-wheel automobile racing, a components colloquially referred to as “Indy automobile racing”. The monitor itself is nicknamed the “Brickyard”, because the racing floor was paved in |

|

Under is an instruction that describes a process, paired with an enter that gives additional context. Write a response that appropriately completes the request. ### Instruction: ### Enter: ### Response: |

It holds a mix of residential and business locale from upper-class residents, like politicians, diplomats, expatriates and artists. It was known as Jordan road earlier than the Iranian revolution. It’s amongst essentially the most well-known streets of Tehran, experiencing common site visitors jams even at 2:00 AM throughout Summer season. | Nelson Mandela Boulevard is a road in Tehran, Iran. It is among the most well-known streets in north Tehran after Valiasr Avenue which is the longest standard road within the Center East, spanning a south‚Äìnorth axis. It’s also well-known for being one of many liveliest streets of Tehran, experiencing common site visitors jams even at 2:00A.M throughout Summer season. The road is known as after the South African anti-apartheid revolutionary and former President of South Africa, Nelson Mandela. | 1. Nelson Mandela Boulevard is a road in northern Tehran, Iran. 2. It was named after the American presbyterian missionary Samuel M. Jordan. 3. It was renamed Nelson Mandela Boulevard lately. 4. It’s amongst essentially the most well-known streets in north Tehran. 5. It’s also well-known for being one of many liveliest streets of Tehran. |

|

Under is an instruction that describes a process, paired with an enter that gives additional context. Write a response that appropriately completes the request. ### Instruction: ### Enter: ### Response: |

The Wimbledon Championships, generally identified merely as Wimbledon, is the oldest tennis event on the planet and is extensively thought to be essentially the most prestigious. It has been held on the All England Membership in Wimbledon, London, since 1877 and is performed on out of doors grass courts, with retractable roofs over the 2 foremost courts since 2019. Wimbledon is among the 4 Grand Slam tennis tournaments, the others being the Australian Open, the French Open, and the US Open. Wimbledon is the one main nonetheless performed on grass, the normal tennis taking part in floor. Additionally, it’s the solely Grand Slam that retains a night-time curfew, although matches can now proceed till 11.00 pm beneath the lights. The event historically takes place over two weeks in late June and early July, beginning on the final Monday in June and culminating with the Girls’ and Gents’s Singles Finals, scheduled for the Saturday and Sunday on the finish of the second week. 5 main occasions are held every year, with extra junior and invitational competitions additionally going down. In 2009, Wimbledon’s Centre Court docket was fitted with a retractable roof to minimize the lack of taking part in time attributable to rain. A roof was operational over No. 1 Court docket from 2019, when numerous different enhancements have been made, together with including cushioned seating, a desk and 10 independently operable cameras per court docket to seize the video games. Wimbledon traditions embody a strict all-white gown code for opponents, and royal patronage. Strawberries and cream are historically consumed on the event. In contrast to different tournaments, promoting is minimal and low key from official suppliers similar to Slazenger and Rolex. The connection with Slazenger is the world’s longest-running sporting sponsorship, offering balls for the event since 1902. 
Because of the COVID-19 pandemic, 2020 Wimbledon was cancelled, the primary cancellation of the event since World Conflict II. The rescheduled 134th version was staged from 28 June 2021 to 11 July 2021, following from the 2020 cancellation. The one hundred and thirty fifth version was performed between 27 June 2022 and 10 July 2022, and frequently scheduled play occurred on the center Sunday for the primary time. It marks the centenary of the inaugural championships staged on the Centre Court docket. The ATP, ITF, and WTA didn’t award rating factors for the 2022 event, attributable to controversy over the event excluding gamers representing Russia and Belarus. The 2023 Wimbledon Championships would be the 136th staging and can run from 3 July 2023 to 16 July 2023 and it is going to be the primary occasion of King Charles III because the demise of the previous patron, Queen Elizabeth II on 8 September 2022. |

The Wimbledon Championships, commonly known simply as Wimbledon, is the oldest tennis tournament in the world and is widely regarded as the most prestigious. It has been held at the All England Club in Wimbledon, London, since 1877 and is played on outdoor grass courts, with retractable roofs over the two main courts since 2019. The tournament consists of 5 men’s singles events and 5 women’s singles events. The men’s singles is the most prestigious of the 5 men’s singles events, and | The Wimbledon Championships, commonly known simply as Wimbledon, is the oldest tennis tournament in the world and is widely regarded as the most prestigious. It has been held at the All England Club in Wimbledon, London, since 1877 and is played on outdoor grass courts, with retractable roofs over the two main courts since 2019. |

|

Below is an instruction that describes a task, paired with an input that provides further context. Write a response that appropriately completes the request. ### Instruction: ### Input: ### Response: |

The coat of a dog varies depending on its breed. Typically a dog may have either a “double” coat or a “single” coat. “Double” coats originate from breeds that live in colder climates. This type of coat consists of a coarse guard hair and a soft down hair. Dogs with a “single” coat only have the topcoat. | The coats of domestic dogs are of two varieties: “double” being familiar with dogs (as well as wolves) originating from colder climates, made up of a coarse guard hair and a soft down hair, or “single”, with the topcoat only. Breeds may have an occasional “blaze”, stripe, or “star” of white fur on their chest or underside. Premature graying can occur in dogs from as early as one year of age; this is associated with impulsive behaviors | There are two types of coats of dogs: double and single. Double coats are made up of a coarse guard hair and a soft down hair. Single coats only have a topcoat. |

Results for fine-tuning the Meta Llama 3.2 3B text generation model on the Dialogsum dataset

| Inputs | Ground Truth | Response from non-finetuned model | Response from fine-tuned model |
|---|---|---|---|
| Below is a Instruction that holds conversation which describes discussion between two people.Write a response that appropriately summarizes the conversation. ### Instruction: ### Response: | #Person1# and Farmington feel dissatisfied with the ads that Roger did. Farmington will speak to Roger. | The conversation between Person1 and Person2 revolves around a set of catalogue ads that Person1 has found. Person1 expresses their concerns about the quality of the ads, suggesting that they may not be suitable for use. Person2 agrees with Person1’s concerns and suggests that they should speak with Roger, the person responsible for creating the ads. Person2 offers to take a look at the ads and speak with Roger in the morning, indicating that they will address the issue. The conversation is focused on finding a solution to the problem with the catalogue ads. | #Person1# thinks the catalogue ads are not good. Mr. Farmington will speak to Roger. |
| Below is a Instruction that holds conversation which describes discussion between two people.Write a response that appropriately summarizes the conversation. ### Instruction: ### Response: | #Person2# comes to #Person1# to report that #Person2#’s daughter is missing. #Person1# asks about her appearance and whereabouts. | The conversation between Person1 and Person2 revolves around a missing child. Person2 reports that their daughter is missing, and Person1 offers to help. Person2 describes the child’s appearance, including her blond hair, blue eyes, yellow dress, and red shoes. Person1 asks about the last time Person2 saw the child and when it was. Person2 mentions that they saw the child down the street, but are unsure of her current location. Person1 presses for more information, asking how long it has been since Person2 last saw the child. Person2 estimates that it has been 15 minutes. The conversation is a search for information and a potential lead to locate the missing child. | #Person2# tells #Person1# that #Person2#’s daughter is missing. |
| Below is a Instruction that holds conversation which describes discussion between two people.Write a response that appropriately summarizes the conversation. ### Instruction: ### Response: | Ray and Joann have nothing much to do at home and decide to go out. They discuss some choices, including watching a movie, going shopping, and going to a bar. But finally, Ray decides to go to Joann’s home. They will have dinner and play Mah Tiang together. | Ray and Joann were discussing what to do on a Saturday night. They initially considered going to a movie, but decided against it because of the rain. They also ruled out going shopping because Joann was worried about overspending. Ray suggested bowling, but Joann’s injured wrist made that impossible. They then considered going to a bar to sing karaoke, but Ray couldn’t drink due to his medication. Joann suggested coming to Ray’s place, but he didn’t have a car. They decided to play Mahjong instead, and Joann asked if Ray had eaten. Finally, they agreed to play a game of Mahjong for money, with Joann hoping to win. The conversation ended with Ray joking that he didn’t care what he did as long as he wasn’t alone on a Saturday night. | #Person1# and Ray are looking for something to do. They try to decide on a movie, a walk, shopping, bowling, karaoke, and Mah Tiang. Finally, they decide to play Mah Tiang. |
| Below is a Instruction that holds conversation which describes discussion between two people.Write a response that appropriately summarizes the conversation. ### Instruction: ### Response: | #Person2# describes the weather of summer, autumn, and winter in Beijing and #Person1# talks about how the weather is like in Washington. | Person1 and Person2 are discussing the weather in Beijing and Washington. They both agree that autumn is a great season in both cities. Person1 mentions that the summer heat is over and the winter cold is still far away, which suggests that the weather in Beijing is mild in autumn. Person2 asks about the weather in Washington, and Person1 responds that autumn is also nice there. They discuss the weather in Washington, with Person2 asking about the cold in winter and Person1 saying it’s not very cold, but sometimes it snows. Person2 then asks about the rain in summer, and Person1 says it rains heavily. Person2 mentions that Beijing has a lot of rain in summer, but not in autumn, which Person1 agrees with. Person1 mentions that Beijing is dry in autumn, and Person2 says that many people from the south don’t like the weather there. Person1 asks if Person2 is from the south, and Person2 responds that they’re from the north, born in Beijing. Person1 then asks if Beijing is Person2’s hometown, and Person2 confirms that it is. Note: The response is a summary of the conversation, not a rephrased version of the original text. It aims to capture the main points and ideas discussed between the two people. Here is the response in a more formal tone: The conversation between Person1 and Person2 revolves around the weather in Beijing and Washington. They both appreciate the beauty of autumn in both | #Person1# and #Person2# talk about the weather in Beijing and Washington. #Person2# is from the north and was born in Beijing. |
| Below is a Instruction that holds conversation which describes discussion between two people.Write a response that appropriately summarizes the conversation. ### Instruction: ### Response: | #Person2#’s brother-in-law invites #Person2# to attend a small get-together with #Person2#’s relatives and friends. #Person1# is confused about #Person2#’s family relationship. | Person1 and Person2 are discussing a weekend gathering at Person2’s brother-in-law’s house. The event is a mix of family and friends, with some cousins, aunts, uncles, and neighbors attending. Person1 asks if Person2’s great uncle Rick, who is known for being funny, will be there. Person2 confirms that Rick will be attending, along with his step-son and ex-wife, who is Person2’s sister. Person1 seems confused about the family relationship, and Person2 agrees to explain later. The conversation is light-hearted and casual, with a focus on the upcoming gathering. | #Person2# will attend a small get-together at #Person2#’s brother-in-law’s house. #Person1# asks #Person2# about the people who will be there. |
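The Inputs column above shows only the prompt wrapper, with the conversation text itself left out. The following is a minimal sketch of how a Dialogsum sample could be wrapped, assuming the dialogue goes under ### Instruction: and the reference summary serves as the completion. The helper names and the exact line breaks are assumptions for illustration, not the code used to produce these results.

```python
from typing import Dict

# Wrapper text copied from the Inputs column of the table above.
PREFIX = (
    "Below is a Instruction that holds conversation which describes discussion "
    "between two people.Write a response that appropriately summarizes the conversation."
)


def build_summary_prompt(dialogue: str) -> str:
    """Wrap a dialogue in the instruction template (the layout is an assumption)."""
    return f"{PREFIX}\n\n### Instruction:\n{dialogue}\n\n### Response:\n"


def to_training_record(dialogue: str, summary: str) -> Dict[str, str]:
    """Pair the formatted prompt with the reference summary as the completion."""
    return {"prompt": build_summary_prompt(dialogue), "completion": summary}


# Made-up dialogue in the #PersonN# style used by Dialogsum.
record = to_training_record(
    "#Person1#: Is it going to rain today?\n#Person2#: The forecast says yes.",
    "#Person1# and #Person2# talk about the forecast.",
)
print(record["prompt"])
```

At inference time, only the prompt part is sent and the model is expected to continue after ### Response:.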

Dataset formatting

We currently offer two types of fine-tuning: instruction fine-tuning and domain adaptation fine-tuning. You can select a training method by setting the parameter instruction_tuned to True or False.
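As a minimal sketch, assuming training is launched through the SageMaker Python SDK: JumpStart examples typically pass hyperparameter values as strings, which is why the instruction tuning section uses instruction_tuned="True" rather than a Python boolean. The helper `build_hyperparameters` and the `epoch` key are illustrative assumptions, not a documented JumpStart API.

```python
from typing import Dict


def build_hyperparameters(instruction_tuned: bool, **extra: object) -> Dict[str, str]:
    """Return a hyperparameter dict with every value converted to a string.

    Hypothetical helper: instruction_tuned=True selects instruction fine-tuning,
    False selects domain adaptation fine-tuning. Extra keys are passed through.
    """
    hyperparameters = {"instruction_tuned": str(instruction_tuned)}  # "True" or "False"
    hyperparameters.update({key: str(value) for key, value in extra.items()})
    return hyperparameters


domain_hp = build_hyperparameters(instruction_tuned=False)
instruct_hp = build_hyperparameters(instruction_tuned=True, epoch=5)  # "epoch" is an assumed key
print(domain_hp)    # {'instruction_tuned': 'False'}
print(instruct_hp)  # {'instruction_tuned': 'True', 'epoch': '5'}

# With the SageMaker Python SDK, a dict like this would typically be applied with
# estimator.set_hyperparameters(**instruct_hp) before calling estimator.fit(...).
```

Keeping the string conversion in one place avoids accidentally sending a boolean where the training job expects the literal text "True" or "False".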

Domain adaptation format

The text generation model can be fine-tuned on any domain-specific dataset to incorporate domain-specific knowledge and language patterns. After fine-tuning on the domain-specific dataset, the model is expected to generate more relevant and accurate text within that domain. Although few-shot prompting can also guide the model toward domain-specific generation, fine-tuning plays a crucial role in adapting the model’s understanding and generation capabilities to the target domain. Combining fine-tuning on domain data with effective prompting techniques can enable the model to perform various NLP tasks within that domain more effectively.

For input to the model, use a training directory and an optional validation directory. Each directory contains a CSV, JSON, or TXT file. For CSV and JSON files, the training or validation data is taken from the column called text, or from the first column if no column called text is found. The training directory and the validation directory (if provided) should each contain exactly one file.
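To check a local dataset against these rules before uploading it, the following minimal sketch mimics the described behavior: exactly one file per directory, and a column named text preferred over the first column. It assumes CSV files have a header row and that JSON files hold one object per line; `single_file` and `load_texts` are hypothetical helpers, not the training container’s actual code.

```python
import csv
import json
import os
import tempfile
from typing import List


def single_file(directory: str) -> str:
    """Return the path of the only file in `directory`, or raise ValueError."""
    files = [name for name in os.listdir(directory)
             if os.path.isfile(os.path.join(directory, name))]
    if len(files) != 1:
        raise ValueError(f"expected exactly 1 file in {directory}, found {len(files)}")
    return os.path.join(directory, files[0])


def load_texts(path: str) -> List[str]:
    """Read training text, preferring a "text" column and falling back to the first one."""
    extension = os.path.splitext(path)[1].lower()
    with open(path, newline="", encoding="utf-8") as f:
        if extension == ".txt":
            return [f.read()]  # Raw text file: use the whole document.
        if extension == ".csv":
            reader = csv.DictReader(f)
            rows = list(reader)
            columns = list(reader.fieldnames or [])
        elif extension == ".json":
            # Assumption: JSON files hold one object per line.
            rows = [json.loads(line) for line in f if line.strip()]
            columns = list(rows[0]) if rows else []
        else:
            raise ValueError(f"unsupported file type: {extension}")
    if not columns:
        return []
    column = "text" if "text" in columns else columns[0]
    return [str(row[column]) for row in rows]


with tempfile.TemporaryDirectory() as train_dir:
    with open(os.path.join(train_dir, "train.csv"), "w", newline="", encoding="utf-8") as f:
        f.write("document,year\nfirst filing,2021\nsecond filing,2022\n")
    # No "text" column here, so the first column ("document") is used.
    print(load_texts(single_file(train_dir)))  # ['first filing', 'second filing']
```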

The output is a trained model that can be deployed for inference.

The following is an example of a TXT file for fine-tuning the text generation model. The TXT file contains SEC filings of Amazon from 2021–2022:

Instruction fine-tuning

The text generation model can be instruction-tuned on any text data, provided that the data is in the expected format. The instruction-tuned model can then be deployed for inference. By default, instruction tuning is set to false. Therefore, to use an instruction tuning dataset, set instruction_tuned="True".

For input, you can use a training directory and an optional validation directory. The training and validation directories should contain one or more JSON lines (.jsonl) formatted files. In addition, the training directory can contain an optional *.json file describing the input and output formats.
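The directory rules above can be checked locally before upload. This is a minimal sketch; `validate_training_folder` is a hypothetical helper that enforces only what the text states (one or more .jsonl data files, each line a JSON object, at most one optional .json format file). Skipping blank lines is an assumption of this sketch, and the template content written in the demo is made up.

```python
import json
import os
import tempfile
from typing import List, Optional, Tuple


def validate_training_folder(folder: str) -> Tuple[List[str], Optional[str]]:
    """Return (jsonl data files, optional template file) or raise ValueError."""
    data_files: List[str] = []
    templates: List[str] = []
    for name in sorted(os.listdir(folder)):
        path = os.path.join(folder, name)
        if not os.path.isfile(path):
            continue
        if name.endswith(".json"):
            templates.append(name)  # Optional file describing input/output formats.
            continue
        if not name.endswith(".jsonl"):
            raise ValueError(f"{name}: data files must use the .jsonl extension")
        with open(path, encoding="utf-8") as f:
            for line_no, line in enumerate(f, start=1):
                # Invalid JSON raises json.JSONDecodeError, a ValueError subclass.
                if line.strip() and not isinstance(json.loads(line), dict):
                    raise ValueError(f"{name}:{line_no}: each line must be a JSON object")
        data_files.append(name)
    if not data_files:
        raise ValueError("no .jsonl data files found")
    if len(templates) > 1:
        raise ValueError(f"expected at most one template file, found {templates}")
    return data_files, (templates[0] if templates else None)


with tempfile.TemporaryDirectory() as train_dir:
    for name in ("part-1.jsonl", "part-2.jsonl"):
        with open(os.path.join(train_dir, name), "w", encoding="utf-8") as f:
            f.write(json.dumps({"question": "Hi?", "answer": "Hello"}) + "\n")
    with open(os.path.join(train_dir, "template.json"), "w", encoding="utf-8") as f:
        json.dump({"prompt": "{question}", "completion": "{answer}"}, f)  # Hypothetical template.
    result = validate_training_folder(train_dir)
    print(result)  # (['part-1.jsonl', 'part-2.jsonl'], 'template.json')
```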

The best model is selected according to the validation loss, calculated at the end of each epoch. If a validation set is not given, an (adjustable) percentage of the training data is automatically split off and used for validation.
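The paragraph above describes two mechanisms: an automatic hold-out split when no validation set is given, and best-checkpoint selection by validation loss. The following minimal sketch shows both in plain Python. The function names, the default ratio of 0.2, and the seed are assumptions for illustration, and the loss values are made-up numbers, not results from this post.

```python
import random
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def split_train_validation(samples: Sequence[T], validation_split_ratio: float = 0.2,
                           seed: int = 0) -> Tuple[List[T], List[T]]:
    """Shuffle a copy of `samples` and hold out a fraction for validation."""
    if not 0.0 < validation_split_ratio < 1.0:
        raise ValueError("validation_split_ratio must be between 0 and 1")
    shuffled = list(samples)
    random.Random(seed).shuffle(shuffled)  # Seeded, so the split is reproducible.
    n_val = max(1, int(len(shuffled) * validation_split_ratio))
    return shuffled[n_val:], shuffled[:n_val]


def best_epoch(validation_losses: Sequence[float]) -> int:
    """Return the 0-based epoch index with the lowest validation loss."""
    return min(range(len(validation_losses)), key=validation_losses.__getitem__)


train, val = split_train_validation(list(range(10)), validation_split_ratio=0.2, seed=42)
print(len(train), len(val))  # 8 2
print(best_epoch([1.92, 1.41, 1.37, 1.45]))  # 2 (illustrative losses)
```

Selecting by validation loss rather than keeping the last epoch guards against later epochs that overfit the training data.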

The training data must be in JSON lines (.jsonl) format, where each line is a dictionary representing a single data sample. All training data must be in a single folder; however, it can be saved in multiple .jsonl files. The .jsonl file extension is mandatory. The training folder can also contain a template.json file describing the input and output formats. If no template file is given, the following template will be used:

In this case, the data in the JSON lines entries must include prompt and completion fields. If a custom template is provided, it must also use prompt and completion keys to define the input and output templates. The following is a sample custom template:

Here, the data in the JSON lines entries must include the question, context, and answer fields.
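To see how a custom template ties field names in the data to the final training text, here is a minimal sketch. The template strings are hypothetical and assume Python str.format-style {field} placeholders; the record is made up. The sketch writes template.json and train.jsonl to a temporary folder, reads them back, and fills the template from one entry.

```python
import json
import os
import string
import tempfile
from typing import Dict, Set

# Hypothetical custom template; placeholder names must match the JSON lines keys.
TEMPLATE = {
    "prompt": "question: {question} context: {context}",
    "completion": "{answer}",
}


def required_fields(template: Dict[str, str]) -> Set[str]:
    """Collect the {placeholder} names used in the prompt and completion templates."""
    return {
        field
        for text in template.values()
        for _, field, _, _ in string.Formatter().parse(text)
        if field
    }


def render(template: Dict[str, str], record: Dict[str, str]) -> Dict[str, str]:
    """Fill the prompt and completion templates from one JSON lines entry."""
    missing = required_fields(template) - set(record)
    if missing:
        raise KeyError(f"record is missing fields: {sorted(missing)}")
    return {key: text.format(**record) for key, text in template.items()}


# Illustrative entry; a real dataset would have many such lines.
records = [
    {"question": "Where is Wimbledon held?",
     "context": "Wimbledon is held at the All England Club in London.",
     "answer": "At the All England Club in London."},
]

with tempfile.TemporaryDirectory() as train_dir:
    # Write the template next to the data, one JSON object per line.
    with open(os.path.join(train_dir, "template.json"), "w", encoding="utf-8") as f:
        json.dump(TEMPLATE, f)
    with open(os.path.join(train_dir, "train.jsonl"), "w", encoding="utf-8") as f:
        for record in records:
            f.write(json.dumps(record) + "\n")

    # Read both back and render the first training example.
    with open(os.path.join(train_dir, "template.json"), encoding="utf-8") as f:
        template = json.load(f)
    with open(os.path.join(train_dir, "train.jsonl"), encoding="utf-8") as f:
        first = json.loads(f.readline())
    print(render(template, first)["prompt"])
```

Checking the placeholders against each record up front surfaces a missing field as a clear error instead of a failure partway through training.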

The output is a trained model that can be deployed for inference.

We provide a subset of Amazon’s SEC filings data, downloaded from the publicly available EDGAR system. For instructions on accessing the data, refer to Accessing EDGAR Data.

License: Creative Commons Attribution-ShareAlike License (CC BY-SA 4.0)