For roboticists, one challenge towers above all others: generalization, the ability to create machines that can adapt to any environment or condition. Since the 1970s, the field has evolved from writing sophisticated programs to using deep learning, teaching robots to learn directly from human behavior. But a critical bottleneck remains: data quality. To improve, robots need to encounter scenarios that push the boundaries of their capabilities, operating at the edge of their mastery. This process traditionally requires human oversight, with operators carefully challenging robots to expand their abilities. As robots become more sophisticated, this hands-on approach hits a scaling problem: the demand for high-quality training data far outpaces humans’ ability to provide it.

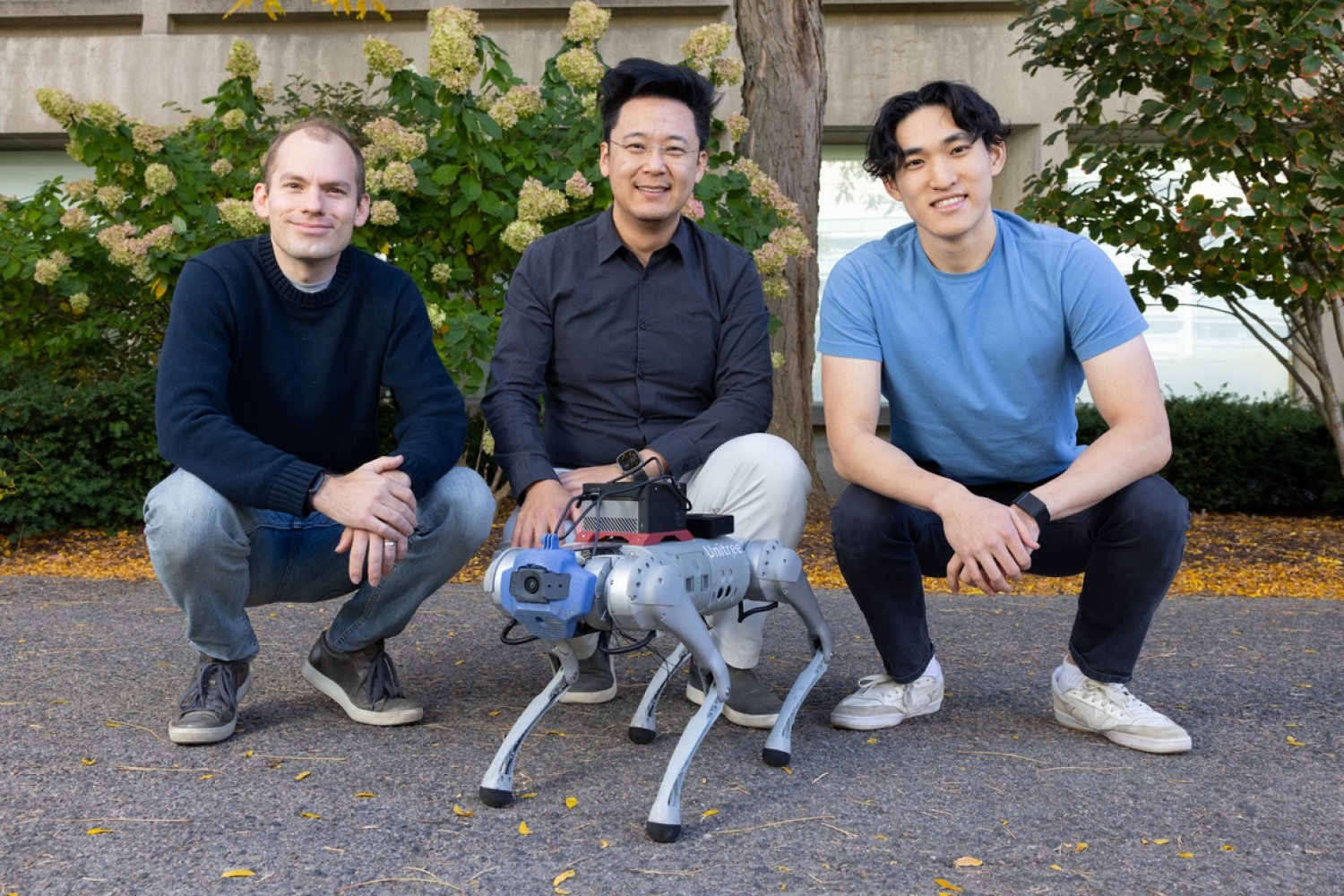

Now, a team of MIT Computer Science and Artificial Intelligence Laboratory (CSAIL) researchers has developed a novel approach to robot training that could significantly accelerate the deployment of adaptable, intelligent machines in real-world environments. The new system, called “LucidSim,” uses recent advances in generative AI and physics simulators to create diverse and realistic virtual training environments, helping robots achieve expert-level performance in difficult tasks without any real-world data.

LucidSim combines physics simulation with generative AI models, addressing one of the most persistent challenges in robotics: transferring skills learned in simulation to the real world. “A fundamental challenge in robot learning has long been the ‘sim-to-real gap,’ the disparity between simulated training environments and the complex, unpredictable real world,” says MIT CSAIL postdoc Ge Yang, a lead researcher on LucidSim. “Previous approaches often relied on depth sensors, which simplified the problem but missed crucial real-world complexities.”

The multipronged system is a blend of different technologies. At its core, LucidSim uses large language models to generate various structured descriptions of environments. These descriptions are then transformed into images using generative models. To ensure that these images reflect real-world physics, an underlying physics simulator is used to guide the generation process.

The birth of an idea: From burritos to breakthroughs

The inspiration for LucidSim came from an unexpected place: a conversation outside Beantown Taqueria in Cambridge, Massachusetts. “We wanted to teach vision-equipped robots how to improve using human feedback. But then, we realized we didn’t have a pure vision-based policy to begin with,” says Alan Yu, an undergraduate student in electrical engineering and computer science (EECS) at MIT and co-lead author on LucidSim. “We kept talking about it as we walked down the street, and then we stopped outside the taqueria for about half an hour. That’s where we had our moment.”

To cook up their data, the team generated realistic images by extracting depth maps, which provide geometric information, and semantic masks, which label different parts of an image, from the simulated scene. They quickly realized, however, that with tight control on the composition of the image content, the model would produce nearly identical images from the same prompt. So, they devised a way to source diverse text prompts from ChatGPT.

This approach, however, only resulted in a single image. To make short, coherent videos that serve as little “experiences” for the robot, the scientists hacked together some image magic into another novel technique the team created, called “Dreams In Motion.” The system computes the movements of each pixel between frames, to warp a single generated image into a short, multi-frame video. Dreams In Motion does this by considering the 3D geometry of the scene and the relative changes in the robot’s perspective.
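The idea of moving each pixel according to scene geometry and camera motion can be illustrated with a minimal sketch. This is not the team's implementation: it assumes a simple pinhole camera, a camera that only translates (no rotation), and a naive forward warp with no occlusion handling or hole filling. The names `pixel_flow` and `warp` and all parameter values are hypothetical.

```python
from typing import List, Tuple

Grid = List[List[float]]
Flow = List[List[Tuple[float, float]]]


def pixel_flow(depth: Grid, focal: float, cx: float, cy: float,
               translation: Tuple[float, float, float]) -> Flow:
    """Per-pixel image motion caused by a pure camera translation.

    Assumes every point stays in front of the camera after the move.
    """
    tx, ty, tz = translation
    flow = []
    for v, row in enumerate(depth):
        flow_row = []
        for u, z in enumerate(row):
            # Back-project pixel (u, v) to a 3D point using its depth.
            x = (u - cx) * z / focal
            y = (v - cy) * z / focal
            # Express that point in the frame of the moved camera.
            x2, y2, z2 = x - tx, y - ty, z - tz
            # Re-project into the new image with the same pinhole model.
            u2 = focal * x2 / z2 + cx
            v2 = focal * y2 / z2 + cy
            flow_row.append((u2 - u, v2 - v))
        flow.append(flow_row)
    return flow


def warp(image: Grid, flow: Flow, fill: float = 0.0) -> Grid:
    """Forward-warp an image, rounding each pixel's destination."""
    h, w = len(image), len(image[0])
    out = [[fill] * w for _ in range(h)]
    for v in range(h):
        for u in range(w):
            du, dv = flow[v][u]
            u2, v2 = round(u + du), round(v + dv)
            if 0 <= u2 < w and 0 <= v2 < h:
                out[v2][u2] = image[v][u]
    return out


# Toy scene: a flat wall 2 units away, camera slides 1 unit to the right.
frame = [[1.0, 2.0, 3.0]]
wall_depth = [[2.0, 2.0, 2.0]]
wall_flow = pixel_flow(wall_depth, focal=2.0, cx=0.0, cy=0.0,
                       translation=(1.0, 0.0, 0.0))
shifted = warp(frame, wall_flow)  # shifted == [[2.0, 3.0, 0.0]]
```

In this toy case the wall slides one pixel to the left as the camera moves right, and a surface at half the depth would slide twice as far. That depth-dependent parallax is what lets a single still image stand in for several consecutive viewpoints.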

“We outperform domain randomization, a method developed in 2017 that applies random colors and patterns to objects in the environment, which is still considered the go-to method these days,” says Yu. “While this technique generates diverse data, it lacks realism. LucidSim addresses both diversity and realism problems. It’s exciting that even without seeing the real world during training, the robot can recognize and navigate obstacles in real environments.”
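For contrast, the baseline Yu describes can be sketched in a few lines. The example below is only an illustration of the general idea of domain randomization, assuming a semantic mask of integer labels as input; the function name `randomize_colors` and the toy mask are hypothetical and do not come from the paper.

```python
import random
from typing import Dict, List, Tuple

Color = Tuple[int, int, int]


def randomize_colors(mask: List[List[int]], rng: random.Random) -> List[List[Color]]:
    """Paint every semantic label in `mask` with its own random RGB color."""
    palette: Dict[int, Color] = {}
    image = []
    for row in mask:
        out_row = []
        for label in row:
            if label not in palette:
                # First time this label appears: draw a fresh random color.
                palette[label] = (rng.randrange(256),
                                  rng.randrange(256),
                                  rng.randrange(256))
            out_row.append(palette[label])
        image.append(out_row)
    return image


# Hypothetical 2x3 mask: 0 = floor, 1 = obstacle.
mask = [[0, 0, 1],
        [0, 1, 1]]
# Each seed yields another randomly colored view of the same geometry.
samples = [randomize_colors(mask, random.Random(seed)) for seed in range(3)]
```

Every sample keeps the scene layout but assigns arbitrary colors, which is where the variety comes from and also why the resulting images do not look like real scenes.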

The team is particularly excited about the potential of applying LucidSim to domains outside quadruped locomotion and parkour, their main test bed. One example is mobile manipulation, where a mobile robot is tasked with handling objects in an open area and where color perception is critical. “Today, these robots still learn from real-world demonstrations,” says Yang. “Although collecting demonstrations is easy, scaling a real-world robot teleoperation setup to thousands of skills is challenging because a human has to physically set up each scene. We hope to make this easier, thus qualitatively more scalable, by moving data collection into a virtual environment.”

Who’s the real expert?

The team put LucidSim to the test against an alternative, where an expert teacher demonstrates the skill for the robot to learn from. The results were surprising: Robots trained by the expert struggled, succeeding only 15 percent of the time, and even quadrupling the amount of expert training data barely moved the needle. But when robots collected their own training data through LucidSim, the story changed dramatically. Just doubling the dataset size catapulted success rates to 88 percent. “And giving our robot more data monotonically improves its performance; eventually, the student becomes the expert,” says Yang.

“One of the main challenges in sim-to-real transfer for robotics is achieving visual realism in simulated environments,” says Stanford University assistant professor of electrical engineering Shuran Song, who wasn’t involved in the research. “The LucidSim framework provides an elegant solution by using generative models to create diverse, highly realistic visual data for any simulation. This work could significantly accelerate the deployment of robots trained in virtual environments to real-world tasks.”

From the streets of Cambridge to the cutting edge of robotics research, LucidSim is paving the way toward a new generation of intelligent, adaptable machines: ones that learn to navigate our complex world without ever setting foot in it.

Yu and Yang wrote the paper with four fellow CSAIL affiliates: Ran Choi, an MIT postdoc in mechanical engineering; Yajvan Ravan, an MIT undergraduate in EECS; John Leonard, the Samuel C. Collins Professor of Mechanical and Ocean Engineering in the MIT Department of Mechanical Engineering; and Phillip Isola, an MIT associate professor in EECS. Their work was supported, in part, by a Packard Fellowship, a Sloan Research Fellowship, the Office of Naval Research, Singapore’s Defence Science and Technology Agency, Amazon, MIT Lincoln Laboratory, and the National Science Foundation Institute for Artificial Intelligence and Fundamental Interactions. The researchers presented their work at the Conference on Robot Learning (CoRL) in early November.